Stan: Instruments are the only window into the process. Errors and incorrect ranges can result in inefficient and unsafe operation. We asked Glenn Gardner, Fluke product planner, to help complete our perspective on calibration by taking a look at future trends.

Greg: Stan and I enlisted the help of engineers who were proficient in the design, installation, checkout and start-up of electrical devices and got specialists to do the high-voltage stuff. We could focus on instrumentation. In the 1990s we saw the start of the decline of instrument engineers and technicians in the plants and the rise of groups for distributed control systems (DCS) and information technology (IT). Who is making sure the best instrument technology is used and maintained? Are the plant instrument engineer and technician becoming extinct?

Glenn: On the engineering side, the workload is often split between an instrument engineer responsible for the instrument management system, and a controls engineer who focuses on the DCS configuration and performance. On the technician side, workforce reductions have caused a trend towards hybrid "E&I" techs who maintain both automation and electrical systems, including drives and switchgear. Overall, the trend is towards generalism: Anyone working in automation is expected to understand the basics of asset management systems, data historians, DCS hardware and software, fieldbuses, power distribution, MCCs, networks, wireless, etc.

Stan: How are the technicians adapting?

Glenn: The instrument techs seem to be able to pick up how to support the electrical and power systems. Electrical techs are having a tougher time learning how to calibrate, configure and troubleshoot instrumentation systems.

Greg: I saw a disturbing trend where the emphasis shifted toward moving data around rather than getting better data from better instrumentation and process control, or presenting and analyzing the data better through improved operator interfaces and data analytics. We ended up with a deluge of data, not knowing how good the data was or what it meant. Some IT departments thought they should be responsible for the generation and use of data.

Glenn: The confusion seems to be lessening, with IT groups staying on the business technology (BT) side. It's noteworthy that the IT workforce tends to be fairly young, whereas the plant technician workforce in the USA and Europe is nearing retirement. I'm concerned about who will inherit the tribal knowledge required to maintain the plant systems. The inverse of this problem is present in emerging regions: There are plenty of young engineers and techs at process plants, but very few experienced mentors.

Stan: What are the trends in calibration practices from the decrease in resources and expertise?

Glenn: Spot checks (in-situ where possible) are used to make a one-point calibration. A zero adjustment is used to compensate for an offset between the transmitter output and a precision measurement at the transmitter (e.g., precision pressure gage near pressure sensor). A span adjustment requires the removal of the transmitter and a source to provide at least two operating values of the process variable measured.

Greg: The measurement errors seen in a control loop are more from offsets or zero shifts than from changes in span. Also, a zero adjustment can compensate for a span error for control at a setpoint. If there is poor control, the span error shows up as a change in process gain. Since there are much larger sources of nonlinearity, the span error is mostly a consideration when operating at vastly different setpoints. We take advantage of this understanding in pH measurements by taking a sample and using a lab meter at the pH measurement point to make an offset adjustment. To make span adjustments to account for changes in electrode efficiency would require removal of the electrodes and testing in buffer solutions, upsetting the equilibrium of the reference electrode.
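Greg's point about a zero adjustment covering for a span error at the setpoint can be illustrated with a little arithmetic. The sketch below is a minimal illustration, not a vendor procedure: the function names, the 2% span error, the 0.15 zero shift and the setpoint of 7.0 are all hypothetical values chosen for the example.

```python
def transmitter_reading(true_value, zero_shift=0.0, span_error=0.0, zero_trim=0.0):
    """Reading from a hypothetical transmitter with a zero shift and a span error.

    span_error is fractional (0.02 means the reading slope is 2% too high).
    zero_trim is the offset applied by a one-point (zero) adjustment.
    """
    return true_value * (1.0 + span_error) + zero_shift + zero_trim


def one_point_trim(reference_value, reading):
    """Zero trim that makes the reading agree with the reference at one point."""
    return reference_value - reading


# Hypothetical numbers for illustration only.
setpoint = 7.0
zero_shift = 0.15
span_error = 0.02

# Spot check at the setpoint against a precision reference.
raw_at_setpoint = transmitter_reading(setpoint, zero_shift, span_error)
trim = one_point_trim(setpoint, raw_at_setpoint)

for true_value in (5.0, 7.0, 9.0):
    reading = transmitter_reading(true_value, zero_shift, span_error, trim)
    print(f"true={true_value:.2f} reading={reading:.3f} error={reading - true_value:+.3f}")
```

After the trim, the error is zero at the setpoint and grows in proportion to the distance from it (here, span error times the deviation from setpoint), which is why the leftover span error behaves like a small change in gain rather than an offset.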

Stan: How does this method fit in with a documenting calibrator?

Glenn: Spot check results and the criticality of the measurement are used to help determine when a full calibration should be done with a documenting calibrator.

Greg: What are the choices for calibrating a temperature transmitter?

Glenn: We have three ways of doing temperature transmitter calibrations. First, we can use a thermocouple millivolt or RTD resistance source to calibrate the temperature transmitter output. Obviously, this does not test the accuracy or condition of the sensor. Second, we can pull out the sensor and insert it into a temperature bath and perform a full calibration. This method is much more common when the calibration is performed in the shop rather than the field. Third, some thermowells have a slot for the direct insertion of a reference probe that can be used to perform a single-point calibration in-situ.
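For the first method, the resistance to source for a given temperature has to be known. As a rough sketch, assuming a Pt100 sensor that follows the standard IEC 60751 Callendar-Van Dusen coefficients at 0 degC and above (the sensor type is an assumption; the interview does not name one), the value can be computed as follows. The function name is hypothetical.

```python
# IEC 60751 coefficients for a standard platinum RTD (alpha = 0.00385).
R0_OHMS = 100.0   # Pt100 resistance at 0 degC (assumed sensor type)
A = 3.9083e-3
B = -5.775e-7


def pt100_resistance(temp_c):
    """Resistance in ohms of a Pt100 at temp_c, valid for 0 to 850 degC."""
    if not 0.0 <= temp_c <= 850.0:
        raise ValueError("this simplified form only covers 0 to 850 degC")
    return R0_OHMS * (1.0 + A * temp_c + B * temp_c ** 2)


# Resistance values a source would simulate at three check points.
for temp_c in (0.0, 50.0, 100.0):
    print(f"{temp_c:6.1f} degC -> {pt100_resistance(temp_c):.4f} ohm")
```

As Glenn notes, sourcing these values only exercises the transmitter electronics; it says nothing about the condition of the sensor itself.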

Stan: What are the relative merits of field and bench-top calibration?

Glenn: Field calibration tends to be more efficient, causes less process exposure and minimizes loop downtime. Bench-top calibration enables visual inspection of the primary element and allows the technician to use more advanced sourcing equipment such as temperature baths and pressure controllers. In addition, the bench-top model is useful during plant outages as it allows the most skilled calibration technician to focus on calibrating an uninterrupted flow of devices at the bench top.

Greg: Since pH electrodes are especially fragile, references may take hours to days to equilibrate, and aging and coatings are best detected by an increase in response time, the best practice is to do pH checks and tests at the measurement location. For a response time test, the electrode can be removed and inserted into the lab sample after the pH of the sample was verified. Response times greater than 20 seconds indicate significant aging or coating of the glass. The aging is not visually perceptible, and seemingly insignificant coatings of a few millimeters may not be obvious.
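The response time test can be reduced to a small calculation on logged readings. The sketch below assumes the response time is taken as the time to cover 63% of the step between the first and last logged values; the interview gives the 20-second limit but does not say which fraction is meant, so `fraction` is a parameter and the sample data are hypothetical.

```python
def response_time(times_s, ph_values, fraction=0.63):
    """Seconds for the pH to cover `fraction` of the step from first to last sample.

    Uses linear interpolation between samples. Returns None if there is no step.
    """
    start, final = ph_values[0], ph_values[-1]
    if start == final:
        return None
    target = start + fraction * (final - start)
    rising = final > start
    for i in range(1, len(ph_values)):
        prev, cur = ph_values[i - 1], ph_values[i]
        crossed = cur >= target if rising else cur <= target
        if crossed:
            if cur == prev:
                return times_s[i] - times_s[0]
            part = (target - prev) / (cur - prev)
            return times_s[i - 1] + part * (times_s[i] - times_s[i - 1]) - times_s[0]
    return None


# Hypothetical log: electrode moved from a pH 7 stream into a pH 4 sample.
times_s = [0, 10, 20, 30, 40, 60]
ph_values = [7.0, 6.0, 5.0, 4.5, 4.2, 4.0]

LIMIT_S = 20.0  # limit quoted in the interview
t_resp = response_time(times_s, ph_values)
print(f"response time: {t_resp:.1f} s, suspect aging or coating: {t_resp > LIMIT_S}")
```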

Stan: How is the data managed?

Glenn: The best practice is to store calibration records in a dedicated calibration management system (CMS). A CMS makes it easy to trend as-found and as-left data, track calibration assets, identify past-due calibrations, and audit previous work. About a third of existing process plants use this method. Alternatively, results can be stored in Excel, in the Enterprise Resource Planning (ERP) system, or on paper. I'm not a big advocate of these latter methods. Excel files are very prone to human error in data entry, and ERP or paper-based systems do not easily enable proactive tracking and trending.
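To make the kind of tracking Glenn describes concrete, here is a minimal sketch of past-due detection and as-found versus as-left trending. It is not modeled on any particular CMS product; the record fields, tag names, dates and the 365-day interval are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class CalibrationRecord:
    tag: str                    # instrument tag (hypothetical naming)
    performed: date             # date the calibration was done
    as_found_error_pct: float   # error before adjustment, % of span
    as_left_error_pct: float    # error after adjustment, % of span


def past_due(records, interval_days, today):
    """Tags whose most recent calibration is older than interval_days."""
    latest = {}
    for rec in records:
        if rec.tag not in latest or rec.performed > latest[rec.tag]:
            latest[rec.tag] = rec.performed
    cutoff = today - timedelta(days=interval_days)
    return sorted(tag for tag, last in latest.items() if last < cutoff)


def drift_between_calibrations(records, tag):
    """As-found error minus the previous as-left error, per calibration interval."""
    history = sorted((r for r in records if r.tag == tag), key=lambda r: r.performed)
    return [cur.as_found_error_pct - prev.as_left_error_pct
            for prev, cur in zip(history, history[1:])]


records = [
    CalibrationRecord("PT-101", date(2023, 1, 10), 0.30, 0.05),
    CalibrationRecord("PT-101", date(2024, 1, 15), 0.45, 0.05),
    CalibrationRecord("TT-205", date(2022, 6, 1), 0.20, 0.10),
]

print(past_due(records, interval_days=365, today=date(2024, 6, 1)))
print(drift_between_calibrations(records, "PT-101"))
```

The drift figure (as-found minus the prior as-left) is what makes the trend useful: it shows how far the device moved on its own between calibrations, which a paper record or a flat spreadsheet does not surface without extra work.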

Greg: What about instrument management systems?

Glenn: Instrument management systems (IMS) are particularly useful for tracking configuration data on field devices. For supported intelligent devices, automated diagnostics can also be sent. Configuration files can be created in advance and downloaded upon device installation, which uncouples the installation and configuration tasks. Both instrument and calibration management systems are often available as modules in a broader software system category known as an asset management system (AMS).

Stan: Progress has been made in making the AMS accessible on operator screens so the information is not held captive in an instrument shop, but a lot more needs to be done to use the ever expanding diagnostic and analysis capabilities.

Glenn: Several vendors are working on fleshing out the various modules of an AMS, including areas such as vibration, thermography, thermodynamic efficiency, RCM/FMEA analysis, machinery reliability metrics, etc. It's definitely an exciting area.

Greg: The AMS SNAP-ON application for smart instrumentation has already proved valuable, as noted in the May/June 2012 Plant Engineering and Maintenance (PEM) article "Refined Monitoring." What are some of the other trends developing from smart transmitters?

Glenn: Smart transmitters will lead vendors to increasingly include digital communication protocols in their field calibrators, making them useful for both commissioning and calibration workflow. Some plants will favor purchasing large quantities of a few standard smart transmitters which they can configure on-site to save costs, while others will lean towards having the OEM custom pre-configure each transmitter.

Stan: What can be done to minimize spares?

Glenn: The greater configuration flexibility of smart transmitters means that a spare can be used for many different applications. This can translate to fewer on-site spares and faster delivery of those spares by the supplier.

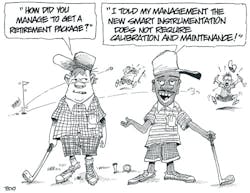

Greg: I see an opportunity to use wireless instruments to do spot checks that would automatically get the data into the AMS. If an additional pressure, temperature or electrode process connection is provided, a wireless transmitter verified in the shop can be used as the precision measurement for the spot check. I particularly see the advantage of wireless for pH. Lab meters have the standard pH electrode temperature compensation (mV/degC), but generally do not have the unique pH solution temperature compensation (pH/degC) needed for each application stream. Wireless pH transmitters can also function as spares and as lab meters for extended testing with process samples to determine life expectancy and find the best electrode technology and calibration schedule. For situations where sensors have limited life expectancy, or where a bad batch or false trip can cause a loss of several hundred thousand to millions of dollars, triple redundancy with middle signal selection should be used because it inherently ignores a single failure of any type. Finally, if you want to retire, use the online top ten list to make management think everything is wonderful.
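Middle signal selection is simple enough to show in a few lines. This is a minimal sketch, assuming three finite numeric readings (a real implementation would also need to handle bad-status or missing signals); the function name and the pH values are hypothetical.

```python
def middle_signal_select(a, b, c):
    """Return the middle of three readings (the median).

    A single reading that fails high, low or frozen can never be the one
    selected unless it still lies between the other two. Assumes all three
    inputs are finite numbers.
    """
    return sorted((a, b, c))[1]


# Hypothetical pH readings from three electrodes.
print(middle_signal_select(7.02, 7.05, 7.03))   # all healthy
print(middle_signal_select(7.02, 7.05, 14.0))   # one electrode failed high
print(middle_signal_select(0.0, 7.05, 7.03))    # one electrode failed low
```

In each failure case the selected value stays with the two healthy electrodes, with no logic needed to decide which sensor is the bad one.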

Top Ten Ways to Impress Your Management with the Trends of a Control System

(10) Make large setpoint changes that will zip past valve dead band and local nonlinearities.

(9) Change the setpoint to operate on the flat part of the titration curve.

(8) Select the tray with minimum process sensitivity for column temperature control.

(7) Pick periods when the unit was down.

(6) Decrease the time span so that just a couple data points are trended.

(5) Increase the reporting interval so that just a couple data points are trended.

(4) Use really thick line sizes.

(3) Add huge signal filters.

(2) Increase the process variable scale span so it is at least ten times the control region of interest.

(1) Increase the historian's data compression so changes are screened out as insignificant.
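Item (1) is funny because it works. The sketch below uses a simple deadband (exception) filter as a stand-in for historian compression; it is not the algorithm of any specific historian, and the function name, deadband settings and sample values are hypothetical.

```python
def deadband_compress(samples, deadband):
    """Store a sample only if it differs from the last stored value by more than deadband."""
    if not samples:
        return []
    stored = [samples[0]]
    for value in samples[1:]:
        if abs(value - stored[-1]) > deadband:
            stored.append(value)
    return stored


# Hypothetical loop oscillating about 0.4 units either side of 50.
samples = [50.0, 50.4, 50.0, 49.6, 50.0, 50.4, 50.0, 49.6]

tight = deadband_compress(samples, deadband=0.1)
loose = deadband_compress(samples, deadband=0.5)
print(f"deadband 0.1 stores {len(tight)} points: {tight}")
print(f"deadband 0.5 stores {len(loose)} points: {loose}")
```

With the wider deadband the oscillation disappears from the record and the trend shows a flat line, which is exactly the effect the list is poking fun at.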