In a Crisis, Are Machines Better than Humans?

Given the advances in technologies and work practices in oil refineries over the past 30 or 40 years, one would expect to see a decline in the number of major safety incidents and associated losses. That expectation would be wrong.

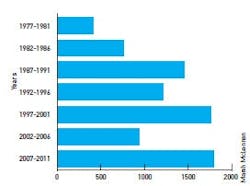

Figure 1. For all the advances in process automation capabilities, losses in refineries due to safety incidents continue to rise. Are operators to blame?

According to a 2012 report by the Energy Practice of Marsh Ltd., a division of insurer Marsh & McLennan, the inflation-adjusted five-year loss rate in the refining industry continued to rise over the period 1977 to 2011, with incidents during start-ups and shutdowns remaining a significant factor (Figure 1). Why is this happening?

Consider the realities of present-day refinery operations:

- Modern control systems are highly effective and make plants safer;

- Modern control rooms provide protected havens for operators to perform their work;

- One operator can control substantially more equipment than was previously possible;

- Each operator has access to as much or as little information as wanted or needed.

Given all these benefits, why are there still problems? Some of these technological advantages can create hazards of their own if care is not taken. For example, because it is now possible to use hundreds of colors on an operator's screens and to alarm in many different ways, systems are often configured with displays or alarms that are inactive under normal conditions but become active unnecessarily during abnormal operations, confusing operators and possibly causing errors.
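One common remedy for alarms that only make sense in certain operating states is often called state-based alarming: each alarm is tagged with the plant states in which it is meaningful, and alarms outside those states are kept off the operator's display. The Python sketch below illustrates the idea under simple assumptions; the tags and state names are hypothetical, and a real system would still log the alarms it holds back.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AlarmDef:
    tag: str
    # Operating states in which this alarm carries useful information.
    relevant_states: frozenset[str]


def alarms_to_show(active: list[AlarmDef], plant_state: str) -> list[str]:
    """Return the tags of active alarms that are meaningful in plant_state.

    Alarms outside their relevant states are filtered from the display.
    (A real system would still record them in the alarm journal; this
    sketch does not model that.)
    """
    return [a.tag for a in active if plant_state in a.relevant_states]


# Hypothetical alarm definitions for illustration only.
LOW_FEED = AlarmDef("FAL-201 low feed flow", frozenset({"running"}))
HIGH_LEVEL = AlarmDef("LAH-100 high column level",
                      frozenset({"startup", "running", "shutdown"}))

print(alarms_to_show([LOW_FEED, HIGH_LEVEL], "startup"))
# ['LAH-100 high column level']
```

During a start-up, the low-feed alarm stays off the screen because it is only meaningful once the unit is running, while the high-level alarm is shown in every state.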

Plant operators need a clear picture of what is happening during a crisis to keep an incident from escalating. Instead, operators often receive too much information during an incident and become confused, especially under stress. For example, the report on the 1994 explosion at the Texaco refinery in Milford Haven, U.K., stated: "In the last 11 minutes before the explosion, the two operators had to recognize, acknowledge and act on 275 alarms."
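The arithmetic shows how little time that left. 275 alarms in 11 minutes is 25 alarms per minute, or one every 2.4 seconds; shared between two operators, each had roughly 4.8 seconds per alarm. The short Python calculation below reproduces those figures and compares them with a flood threshold of 10 alarms per operator in 10 minutes, a commonly cited benchmark that is used here as an assumption rather than a value from the incident report.

```python
from datetime import timedelta

# Figures quoted in the Milford Haven incident report.
ALARM_COUNT = 275
WINDOW = timedelta(minutes=11)
OPERATORS = 2

# Assumed benchmark: a flood starts above 10 alarms per operator per 10 minutes.
FLOOD_PER_OPERATOR_PER_MIN = 10 / 10


def alarms_per_minute(count: int, window: timedelta) -> float:
    """Average alarm arrival rate over the window."""
    return count / (window.total_seconds() / 60)


def seconds_per_alarm(count: int, window: timedelta, operators: int = 1) -> float:
    """Average time available per alarm, if alarms were shared evenly."""
    return window.total_seconds() * operators / count


rate = alarms_per_minute(ALARM_COUNT, WINDOW)
per_operator = rate / OPERATORS
print(f"{rate:.1f} alarms/min overall, {per_operator:.1f} per operator")
print(f"{seconds_per_alarm(ALARM_COUNT, WINDOW, OPERATORS):.1f} s per alarm per operator")
print(f"{per_operator / FLOOD_PER_OPERATOR_PER_MIN:.1f}x the assumed flood benchmark")
# 25.0 alarms/min overall, 12.5 per operator
# 4.8 s per alarm per operator
# 12.5x the assumed flood benchmark
```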

Start-ups and shutdowns of process units, grade changes and other operating transitions are considered normal operations, but they put operators under increased stress because operators know these are the times when things are more likely to go wrong. The stress is compounded when abnormal conditions exist, often leading to inappropriate decisions that can cause production incidents, injuries or even deaths.

A poorly configured system can confuse operators, but a well-designed system can help them. Creating the right balance of human and automation system capabilities depends on understanding how these two entities differ and what strengths each has in crisis situations.

Creating Disaster: BP Texas City Refinery

On March 23, 2005, an explosion and fire in the isomerization unit of BP's Texas City refinery, which at the time was the company's largest, killed 15 people and injured 170.

The incident report identified several issues that operators failed to act on: a level alarm was acknowledged in error, the heating ramp rate was too fast, and the operators tried to start the unit with the splitter level in manual, even though the procedures called for the splitter level to be controlled in automatic during early start-up. These mistakes may have stemmed from a lack of vigilance or a poorly designed control system, but they were quickly compounded as the incident unfolded.

The report cited issues with procedures as one of the causes of the incident:

- "…Failure to follow many established policies and procedures. Supervisors assigned to the unit were not present to ensure conformance with established procedures, which had become custom practice on what was viewed as a routine operation."

- "The team found many areas where procedures, policies and expected behaviors were not met."

The report recommended changes to start-up and shutdown procedures but did not recommend additional training or procedure support from the control system. The incident might have been avoided through the correct use of instrumentation, control and procedures, which we will consider in more detail later.

Figure 3. All control systems should include methodologies to evaluate and capture the creativity and initiative of a plant’s best operators.

Humans Do Count

Having several very skilled "operators" probably saved Qantas Flight 32 on Nov. 4, 2010. The aircraft was an Airbus A380, at the time the largest and most technically advanced passenger aircraft in the world. It had left Singapore for Sydney and was flying over Indonesia when one of its engines blew apart. (Figure 2 shows the extent of the damage.)

The pilots were inundated with alarm messages: 54 alerted them to system failures or impending failures, but only 10 could fit on the screen at a time. Luckily, there were five experienced pilots on that flight, including three captains who were on check flights. Even with that much experience on board, it took 50 minutes for the pilots to work through the messages and establish the status of the plane.

The incident report said that without those pilots, the flight might not have made it. But if the pilots had followed all the advice of the flight systems, the plane would have crashed. The most senior pilot told the others to read the messages but to "feel" the plane. Working from this advice, they landed the plane safely with only one of its four engines in full working order.

Finding the Balance

Could we take the human out of the equation? Theoretically, yes, but the Qantas incident shows that humans and automated systems both have a role to play in a crisis. It is possible to run a process plant without operators and to fly a plane without pilots, but is it wise? Can we use the processing power of automated systems to guide humans, and the deductive power of humans to make correct decisions when presented with a manageable number of options?

Humans have emotions and often get stressed. In a crisis, some people remain calm, but most try to do everything at once, panic or simply tune out. When even the best operator is faced with many simultaneous alarms and other things happening on all sides, he or she will likely try to look at as many things as possible, evaluate various scenarios and then choose a solution. That can take too long. It would be much better if the system provided options and guidance: basic decision support.

Automated systems don't fall asleep or ignore procedures, don't panic under pressure, and can respond quickly to changing conditions. But they can fail. When "trained" properly, they can analyze hundreds of inputs, find patterns and offer refined suggestions to the operator. Humans, on the other hand, are perceptive: they have senses, they can weigh pros and cons, and they can act on advice. The right balance combines the best characteristics of both to produce better outcomes.

Standards Support Decisions

Decisions are made by assessing problems, collecting and verifying information, identifying alternatives, anticipating consequences of possible decisions, and then making a choice using sound and logical judgment based on available information.

Few humans in a crisis can do this without help. They struggle to control the situation long enough to gather the information a sound decision requires. With decision support and guidance, however, the task becomes more manageable.

A decision-support system should be able to:

- Draw on historical data about past events;

- Incorporate both data and models to offer the most likely options;

- Assist operators in semi-structured or unstructured decision-making processes;

- Support, rather than replace, operator judgment; and

- Aim at improving the effectiveness rather than the efficiency of decisions.
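As a rough illustration of the first three points, the Python sketch below ranks candidate responses by how well the current symptoms match past events that were handled successfully, then leaves the choice to the operator. The event records, symptom names and the use of Jaccard similarity are all assumptions made for this example, not features of any particular product.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class PastEvent:
    symptoms: frozenset[str]  # conditions observed during the event
    action: str               # response the operators took
    successful: bool          # did the response bring the unit back under control?


def suggest_actions(current: set[str], history: list[PastEvent],
                    top_n: int = 3) -> list[tuple[str, float]]:
    """Rank responses that worked in past events resembling the current one.

    Similarity is the Jaccard overlap of symptom sets, and each successful
    past response is credited with that similarity. The result is a short
    list of options for the operator; nothing is executed automatically.
    """
    scores: Counter[str] = Counter()
    for event in history:
        if not event.successful:
            continue  # only learn from responses that worked
        union = current | event.symptoms
        if not union:
            continue
        similarity = len(current & event.symptoms) / len(union)
        if similarity > 0:
            scores[event.action] += similarity
    return scores.most_common(top_n)


# Hypothetical event history for illustration.
history = [
    PastEvent(frozenset({"high_level", "high_bottom_temp"}), "cut reboiler heat", True),
    PastEvent(frozenset({"high_level"}), "open bottoms rundown", True),
    PastEvent(frozenset({"high_level"}), "raise reflux", False),
    PastEvent(frozenset({"low_feed_flow"}), "start spare feed pump", True),
]
print(suggest_actions({"high_level", "high_bottom_temp"}, history))
# [('cut reboiler heat', 1.0), ('open bottoms rundown', 0.5)]
```

Returning a ranked shortlist, rather than acting on the top result, keeps the sketch in line with the principle of supporting rather than replacing operator judgment.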

In the process industries, decision support of this nature is not yet widely available, but research is being conducted in key areas such as human-machine interface (HMI) design, alarm management and procedure management, so basic decision support can be provided to operators. To assist this effort, industry standards are either available or being developed.

The International Society for Automation (ISA) has one relevant standard in place and two more efforts under way:

- ANSI/ISA-18.2-2009: Management of Alarm Systems for the Process Industries. Published in 2009, this standard provides requirements and recommendations for the stages of the alarm management lifecycle, including philosophy, identification, rationalization, detailed design, implementation, operation, maintenance, monitoring and assessment, management of change, and audit.

- ISA101: Human-Machine Interfaces. This standard is still at the committee stage. It is aimed at those responsible for designing, implementing, using or managing HMIs in manufacturing applications.

- ISA106: Procedure Automation for Continuous Process Operations. The committee will produce a series of technical reports on procedural automation for continuous process operations, with the aim of providing good practices to address many of the human performance limitations that can occur during procedural operations.

The Role of Procedures

The effective use of procedures is one of the key items in maintaining safe and reliable operations under all conditions. In fact, if configured correctly, well-planned alarms could initiate procedures in an abnormal situation, and a well-designed HMI could bring a developing incident to the attention of the operator in a timely and concise manner.

The airline industry is among the safest and most automated in the world, and procedures play a big part in the way modern aircraft are operated; most could not be flown without them. For many years, pilots have had to follow procedures before, during and after every flight.

In the same way, the start-up and shutdown of a process is always guided by standard operating procedures (SOPs) designed to ensure the process is handled the same way each time.

However, these SOPs are sometimes modified by experienced operators, who believe they see a better way of doing things.

These improved procedures should be evaluated systematically and, where they prove better, turned into current practice. The goal of operations and decision support is to capture the knowledge of the best, and hopefully calmest, operator on his or her best day, under all conditions.

Figure 2. As sophisticated as the safety systems were on the Airbus A380, it took the intervention of five highly skilled pilots to land the plane safely.

Figure 3 depicts the methodology for capturing best-practice procedures. The goal of this approach is to distill best operating practices and find the right balance between fully manual, prompted manual and automated procedures. The new procedures should then be documented and implemented, after which they should be reviewed regularly for continuous improvement. Automating every procedure does not always provide the best solution, but neither does executing every procedure manually.

The best solution is to systematically review the events that caused production interruptions, examine the procedures associated with those events, and determine which type of implementation will improve the facility's safety, health and environmental metrics while providing the best economic return.
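The three execution styles named above can be sketched as a per-step setting. In the hypothetical Python model below, a manual step is only displayed, a prompted step waits for operator confirmation, and an automated step runs on its own; the class and function names are illustrative, and the actions are placeholders rather than calls into a real control system.

```python
from dataclasses import dataclass
from enum import Enum, auto
from typing import Callable


class Mode(Enum):
    MANUAL = auto()     # system shows the instruction; operator performs it
    PROMPTED = auto()   # system proposes the action; operator must confirm
    AUTOMATED = auto()  # system performs the action without asking


@dataclass
class Step:
    text: str
    mode: Mode
    action: Callable[[], None] = lambda: None  # placeholder for a control action


def run_procedure(steps: list[Step], confirm: Callable[[str], bool],
                  log: list[str]) -> bool:
    """Walk a procedure step by step, honoring each step's execution mode.

    Returns False (procedure held) if the operator declines a prompted step.
    """
    for step in steps:
        if step.mode is Mode.MANUAL:
            # The operator carries this out; the system only records the instruction.
            log.append(f"INSTRUCT: {step.text}")
        elif step.mode is Mode.PROMPTED:
            if not confirm(step.text):
                log.append(f"HOLD: operator declined '{step.text}'")
                return False
            step.action()
            log.append(f"DONE (confirmed): {step.text}")
        else:
            step.action()
            log.append(f"DONE (auto): {step.text}")
    return True


# Hypothetical three-step procedure mixing all three modes.
steps = [
    Step("Line up feed to column", Mode.MANUAL),
    Step("Start feed pump", Mode.PROMPTED),
    Step("Ramp reboiler to setpoint", Mode.AUTOMATED),
]
log: list[str] = []
ok = run_procedure(steps, confirm=lambda text: True, log=log)
print(ok, log)
```

Because the mode is a property of each step rather than of the whole procedure, a team can move individual steps between manual, prompted and automated execution as the review process suggests.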

In Figure 3, each operator started with the same SOP, a modular procedure made up of logical steps, but modified it by adding or removing steps to handle different situations and operating styles. On the right-hand side is the resulting best-practice procedure, which evaluates and synthesizes elements from each.
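Part of this comparison can be supported with simple bookkeeping. The Python sketch below, which assumes each operator's variant is recorded as a list of step names, reports steps that at least half of the operators added and base steps that at least half dropped. It ignores step order and only produces a shortlist for the operations team to evaluate; the step names and the 50% threshold are hypothetical.

```python
from collections import Counter


def review_candidates(base: list[str], variants: dict[str, list[str]],
                      min_share: float = 0.5) -> tuple[list[str], list[str]]:
    """Find SOP changes shared by at least min_share of operators.

    Returns (steps commonly added, base steps commonly dropped). These are
    candidates for the operations team to evaluate, not automatic changes.
    """
    base_steps = set(base)
    added: Counter = Counter()
    dropped: Counter = Counter()
    for steps in variants.values():
        own = set(steps)
        added.update(own - base_steps)    # steps this operator introduced
        dropped.update(base_steps - own)  # base steps this operator skipped
    threshold = min_share * len(variants)
    return (sorted(s for s, n in added.items() if n >= threshold),
            sorted(s for s, n in dropped.items() if n >= threshold))


# Hypothetical operator variants of one base SOP.
base = ["check level", "start pump", "check answer-back"]
variants = {
    "operator_a": ["check level", "check suction pressure", "start pump", "check answer-back"],
    "operator_b": ["check level", "check suction pressure", "start pump"],
    "operator_c": ["check level", "start pump", "check answer-back", "notify field"],
}
print(review_candidates(base, variants))
# (['check suction pressure'], [])
```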

Figure 4. Here's an example of an actual procedure in an electronic format, showing how the implementation of best practices can help improve control system operation.

Another simplified example of capturing a procedural best practice is shown in Figure 4. The original SOP was listed as:

- Check base tank level LI100.PV >= 50%;

- Start pump P-101 after correct suction header pressure is confirmed;

- Check answer-back flag;

- Confirm outside operator opened hand valve HV100.
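Converted to electronic form, the original four-step SOP can be expressed as an ordered list of steps, each paired with the condition that completes it. The Python sketch below assumes a simple snapshot of plant readings (the `PumpStartState` fields are hypothetical names; only LI100, P-101 and HV100 come from the SOP) and reports the first step that is not yet satisfied.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PumpStartState:
    li100_pv: float             # base tank level LI100.PV, percent
    suction_pressure_ok: bool   # suction header pressure confirmed
    p101_answer_back: bool      # P-101 running feedback (answer-back flag)
    hv100_open_confirmed: bool  # outside operator reports HV100 open


# The four original SOP steps, each paired with the condition that completes it.
SOP = [
    ("Check base tank level LI100.PV >= 50%", lambda s: s.li100_pv >= 50.0),
    ("Confirm suction header pressure before starting P-101", lambda s: s.suction_pressure_ok),
    ("Check P-101 answer-back flag", lambda s: s.p101_answer_back),
    ("Confirm outside operator opened HV100", lambda s: s.hv100_open_confirmed),
]


def next_step(state: PumpStartState) -> Optional[str]:
    """Return the first SOP step not yet satisfied, or None when complete."""
    for text, done in SOP:
        if not done(state):
            return text
    return None


print(next_step(PumpStartState(li100_pv=42.0, suction_pressure_ok=True,
                               p101_answer_back=False, hv100_open_confirmed=False)))
# Check base tank level LI100.PV >= 50%
```

Keeping the steps as data makes it straightforward to insert the best-practice additions later without rewriting the logic that walks the procedure.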

However, after evaluation and consultation with the operations teams, best practices were added to the initial SOP flow chart. These additions appear within the red dotted boxes.

The SOPs may then be updated and converted into electronic format, making the procedure documentation readily available to the operator. In this format, it's easier to make the SOP a living document that can be continuously improved. Following this exercise, the company can decide whether this is a best practice that can be deployed globally.

BP Texas City Revisited

Now let's revisit the Texas City refinery incident. As a set of circumstances in which the system could have given the operators the correct information at the right time, and possibly even suggested correct actions or prevented incorrect ones, this was the "perfect storm."

A start-up procedure in the control system, with inputs from several key measurements, might have prevented this incident or at least made things more manageable for the operators. A procedure assistant could have helped unsure and overworked operators take corrective action, or it could have taken action for them.

A procedural assistant could have given clear communications for all three units regarding:

- What had transpired during previous shifts;

- Next steps according to approved safety procedures; and

- Safety hazards associated with missteps.

Clearly some mistakes were made, but as things started to go wrong, several warning signs were missed because of the inexperience of the board operator and the lack of a system to interpret the clear measurement information that was available at the time. Although the high-level alarm at the bottom of the splitter column in the isomerization unit had been acknowledged and essentially ignored, the temperatures and pressures in the column gave enough indication that there was liquid high in the column, even above the feed tray.

There was temperature information throughout the column, plus indications of overheating in the stripper bottom, showing that things were not right. There was pressure information, and a rate-of-change alarm could have indicated that the heating ramp rate was too high. But the operators probably could not have digested all this information.
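A rate-of-change alarm of the kind mentioned above compares how fast a measurement is rising against a limit over a recent time window. The Python class below is a minimal sketch of that check; the window length, the limit of 50 degrees per hour and the sample values are assumptions for illustration, not figures from the Texas City investigation.

```python
from collections import deque


class RateOfChangeAlarm:
    """Alarm when a measurement rises faster than max_rate over a sliding window.

    Times are in hours; max_rate is in measurement units per hour. Only rising
    values are alarmed in this sketch.
    """

    def __init__(self, max_rate: float, window_h: float) -> None:
        self.max_rate = max_rate
        self.window_h = window_h
        self.samples: deque[tuple[float, float]] = deque()

    def update(self, t_h: float, value: float) -> bool:
        """Add a sample and return True if the windowed rise rate is too high."""
        self.samples.append((t_h, value))
        # Drop samples older than the window; the newest sample always stays.
        while t_h - self.samples[0][0] > self.window_h:
            self.samples.popleft()
        t0, v0 = self.samples[0]
        if t_h == t0:
            return False  # not enough history yet to compute a rate
        return (value - v0) / (t_h - t0) > self.max_rate


# Hypothetical limit: 50 degrees per hour, judged over a 30-minute window.
roc = RateOfChangeAlarm(max_rate=50.0, window_h=0.5)
for t, temp in [(0.0, 100.0), (0.25, 110.0), (0.5, 140.0)]:
    print(t, roc.update(t, temp))
# 0.0 False
# 0.25 False
# 0.5 True
```

Measuring the rise over a window rather than between consecutive samples makes the check less sensitive to measurement noise, at the cost of a short delay before it can respond.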

A procedure assistant could have triggered actions or prompts in response to the excessive liquid level and abnormal temperature and pressure readings. It could also have prevented an excessive heating ramp rate and, ultimately, initiated a shutdown.
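The escalation described above can be sketched as a small rule set. In the hypothetical Python example below, independent signs of overfilling (an active level alarm, a temperature profile implying liquid above the feed tray, and rising pressure) are counted, so acknowledging the alarm does not remove it as evidence. Two or more signs trigger a shutdown request, an excessive ramp rate holds heating, and a single sign prompts the operator. The inputs and rule order are assumptions, not a validated safety design; a real implementation would belong in a properly engineered safety system.

```python
from dataclasses import dataclass
from enum import IntEnum


class Response(IntEnum):
    NORMAL = 0
    PROMPT_OPERATOR = 1
    HOLD_HEAT_RAMP = 2
    INITIATE_SHUTDOWN = 3


@dataclass
class Indications:
    level_alarm_active: bool      # alarm condition present, whether acknowledged or not
    temps_show_liquid_high: bool  # temperature profile implies liquid above feed tray
    pressure_rising: bool
    ramp_rate_too_high: bool      # e.g. from a rate-of-change check


def assess(ind: Indications) -> Response:
    """Escalate based on how many independent signs point to overfilling."""
    overfill_evidence = sum([ind.level_alarm_active,
                             ind.temps_show_liquid_high,
                             ind.pressure_rising])
    if overfill_evidence >= 2:
        return Response.INITIATE_SHUTDOWN
    if ind.ramp_rate_too_high:
        return Response.HOLD_HEAT_RAMP
    if overfill_evidence == 1:
        return Response.PROMPT_OPERATOR
    return Response.NORMAL


print(assess(Indications(level_alarm_active=True, temps_show_liquid_high=True,
                         pressure_rising=False, ramp_rate_too_high=True)).name)
# INITIATE_SHUTDOWN
```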

So, Are Automated Systems Better than Humans in a Crisis?

This discussion has shown that problems often arise with humans in the workplace during times of crisis and stress. Often, having the right people in the right place at the right time is sufficient. But process plants need to be prepared for situations in which the operator is overloaded, takes things for granted or is inexperienced.

In abnormal operations, systems are configured to produce lots of data, but operators may not be able to handle or interpret it. However, when presented with the right information in the right context during an abnormal condition, operators can do things that automated systems can't. Given logical choices, and with the guidance and support of automated systems, they can evaluate the situation and reason through the actions to take.

Maurice J. Wilkins, PhD, C.Eng, FIChemE, FInstMC, ISA Fellow, is a member of Control’s Process Automation Hall of Fame.
