It was 1961 when I installed my first computer, which was full of vacuum tubes and was the size of a Volkswagen. If I remember correctly, it had 20 KB of memory. In 1965, scientists at MIT's Lincoln Laboratory figured out how two computers could communicate with one another, and the age of the Internet began.

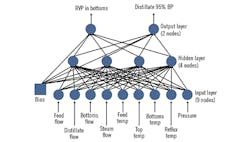

Later, we automation and control engineers came up with neural networks to control our multivariable control systems. One application predicted the physical properties of distillates: many measurements were sent to the bottom layer of a back-propagation neural network, passed through a hidden layer in which the nodes had different gains and biases, and emerged from the top layer as predictions of the distillates’ properties (Figure 1). In a way, this was the basis of sophisticated computer algorithms that evolved into the building blocks of artificial intelligence (AI).
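For readers who have not worked with such networks, the forward pass through the layers described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the three measurements, the two hidden nodes, the sigmoid activation and every gain and bias value are hypothetical and untrained. In a real application, back-propagation training on plant data would set them.

```python
import math

def sigmoid(x):
    """Squash a node's weighted sum into the range 0..1."""
    return 1.0 / (1.0 + math.exp(-x))

def layer(inputs, gains, biases, activation):
    """One layer: each node sums its gain-weighted inputs, adds its bias,
    and applies the activation function."""
    return [
        activation(sum(g * x for g, x in zip(node_gains, inputs)) + b)
        for node_gains, b in zip(gains, biases)
    ]

def predict(measurements, hidden_gains, hidden_biases, out_gains, out_biases):
    """Bottom layer (measurements) -> hidden layer -> top layer (prediction)."""
    hidden = layer(measurements, hidden_gains, hidden_biases, sigmoid)
    return layer(hidden, out_gains, out_biases, lambda v: v)  # linear output

# Hypothetical scaled measurements, e.g. a temperature, a pressure, a flow.
measurements = [0.62, 0.35, 0.80]

# Hypothetical, untrained gains and biases (two hidden nodes, one output).
hidden_gains = [[0.5, -0.2, 0.1], [0.3, 0.8, -0.5]]
hidden_biases = [0.0, 0.1]
out_gains = [[1.2, -0.7]]
out_biases = [0.05]

properties = predict(measurements, hidden_gains, hidden_biases,
                     out_gains, out_biases)
print(properties)  # one predicted (scaled) distillate property
```

Training would adjust the gains and biases until the predictions match laboratory analyses of the distillate; the sketch shows only how a trained network turns measurements into a prediction.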

These experiences with neural networks and model-based control (MBC), plus the ability of computers to communicate, contributed to the evolution of AI algorithms and the Internet. This evolution was somewhat similar to the MBC of distillation, but with one big difference: the evolution of the digital age (the evolution of AI and the Internet) is not yet under control.

So—you may ask—what does this have to do with Putin?

Internet: the double-edged tool

It seems to me that we as a profession share in the responsibility for the consequences of what we create. We contributed to the creation of a sophisticated tool that's evolving out of control. It's evolving in all directions it can and not only in the directions it should, and we automation and control engineers know that out-of-control processes are dangerous. Just as a knife is a useful tool in the hands of a cook making chicken paprikash, it's dangerous in the hands of a gangster. Therefore, its use must be regulated—not the knife itself, but its use. Similarly, the Internet is a double-edged tool, the use of which must be regulated.

Automation engineers know that the harmful consequences of the evolution of our digital age will accelerate if left out of control. We also know that under control, it can bring a better age to our planet. Today, we don't know if humans are capable of directing this evolution and protecting not only our physical, but also our cultural and moral environments.

Today, one third of mankind is connected to the Internet, and it's estimated that by 2030 some 30 billion devices will be connected to it. We live in an age when our dogs are on a leash, but our Internet is not. Why? Well, there are obvious reasons. First, for the Internet, we don't have a leash; we don't have a means to stop the "bad guys" from using the Internet to do harm. Second, the bad guys are just as smart as the good ones.

Yet, it remains up to society to stop those who would use the Internet for harmful purposes. It takes laws, law enforcement and stiff penalties to stop them. But, as of yet, we have no means to do so. We have limited capabilities to protect against cyber-attacks, but we have no reliable means to stop those who would use the Internet to do harm. We have no "leash laws" for the Internet.

On top of that, some of the "good guys" are opposing the introduction of such laws because they believe that the Internet is a form of free speech, and as such it should not be interfered with. (In a way, their view is similar to that of the U.S. Supreme Court, which held that giving money to political candidates is free speech.) So, let us take a look at what harm the out-of-control Internet can do to our cultural and physical environments, and what steps we can take to prevent it.

Impact on our cultural environment

In the past, the evolution of mankind was directed mostly by nature, but today we're starting to direct our own evolution. If mishandled, this can easily lead to devolution. Our children are spending seven hours a day staring at screens and just 16 minutes exercising. In other words, with the development of AI and the Internet, we've reached a crossroads. If we select the right path, if we bring the digital age under control, the road will take coming generations into a better future. But if we don't, if we take the road with the sign "dead end," we can destroy our own civilization.

Besides serving entertainment, education and other useful purposes, the Internet can also spread false information, including photos, videos and other types of misinformation. Protecting against these threats isn't only a technical challenge; it's also a legal one. Plus, without protection, this digital age can also eliminate human privacy, and open the door to surveillance and hacking. We may enter an age where our every move is followed, where all our activities are collected, analyzed and used to place us into different categories, so we can be sold like commodities. We can try to hide behind a dozen passwords, but the hackers can still reach our credit cards and bank accounts, or flood us with misinformation.

The Internet can also be used to spread propaganda, and thereby manipulate public opinion. The protection algorithms against disinformation are still in a primitive state; they operate only at the “keyword” level. This means that, for example, in attempting to filter out disinformation, social media platforms are only looking for statistical trends in word usage. These algorithms can't identify and block ideas, incitement or hate speech. And even if they could, we don't have clear legal definitions of which lies are allowable and which are not. In fact, the more lies we hear without the lies being penalized, the more used to lies in general we become, and the less upset we become when hearing another one. This process can lead to not trusting each other, our institutions and eventually democracy itself.
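To make the limitation of keyword-level filtering concrete, here is a minimal Python sketch. It is not any platform's actual algorithm; the word list, the scoring rule and the threshold are all hypothetical and chosen only for illustration.

```python
import re

# Hypothetical list of flagged words; real systems use far larger,
# statistically derived vocabularies.
FLAGGED_KEYWORDS = {"hoax", "miracle", "rigged"}

def keyword_score(text):
    """Return the fraction of words in the text that are on the flagged list."""
    words = re.findall(r"[a-z']+", text.lower())
    if not words:
        return 0.0
    hits = sum(1 for word in words if word in FLAGGED_KEYWORDS)
    return hits / len(words)

def is_flagged(text, threshold=0.1):
    """Flag the text when flagged words reach the (assumed) threshold."""
    return keyword_score(text) >= threshold

# A correction that merely mentions a flagged word is caught...
print(is_flagged("The hoax claim was debunked"))
# ...while a false claim phrased in ordinary words passes untouched.
print(is_flagged("The vote count was secretly changed overnight"))
```

The filter counts words, not meaning: it flags the first sentence, which corrects a falsehood, and passes the second, which asserts one.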

Impact on our physical environment

Other than global warming and nuclear proliferation, probably the most serious threats facing mankind are the consequences of hacking and cyber-attacks. I will not even attempt to list the many such incidents that have already occurred, but will just note that they range from the hacking of individual networks and computers to state-sponsored attacks on private or government institutions, industries or the complete communication networks of whole nations, from Estonia to Ukraine, by disabling their Internet servers.

In the latest case, hackers attached their malware to a software update package coming from SolarWinds, based in Austin, Texas. This company produces a network and applications monitoring platform called Orion, which is used by some 18,000 customers in the government and the private sector. The tainted software update packages were distributed between March and June in 2020, which also allowed the hackers to access the network of the cybersecurity firm FireEye. It took half a year just to discover that this occurred. It will probably take another six months to understand what has been taken and how much of that was top-secret material.

It borders on the ridiculous that we require government inspections and permits when building a family home, while a software update package can be distributed without any government inspection to make sure it doesn't contain malware. It borders on the criminal that the bill titled "Protecting cyberspace as a national asset" introduced in the U.S. Senate last summer still hasn't passed.

Once a worldwide network of laws, enforcement mechanisms and other cyber-attack prevention systems is established, we in the automation and control profession should and will share our accumulated knowledge in this field of Internet and cybersecurity. We must take immediate action, because otherwise President Putin will be right about what he said on Sept. 1, 2017: "Whoever masters AI and the Internet will become the ruler of the world."

About the Author

Béla Lipták

Columnist and Control Consultant

Béla Lipták is an automation and safety consultant and editor of the Instrument and Automation Engineers’ Handbook (IAEH).