Because microprocessors and software can run almost anywhere, they can simultaneously assist and take advantage of wireless and mobile technologies. These flexible, mutual benefits also enable edge computing to be applied in both small- and large-scale applications.

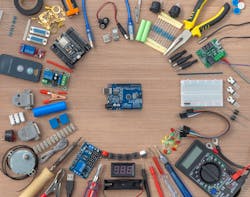

To pretreat and prioritize production information, and avoid sending too much data to a cloud-computing service, system integrator Patti Engineering in Auburn Hills, Mich., has been experimenting with lower-cost edge devices, such as Arduino’s open-source, single-board microcontrollers, to collect input from simple I/O points on equipment and do more control on the edge.

“Of course, PLCs still have a big place in plant-floor control, but legacy devices like these aren’t intended to talk to the cloud, and it’s difficult for the cloud to talk to them,” says Sam Hoff, president and CEO at Patti, a certified member of the Control System Integrators Association. “To enable these communications without removing existing components, we’re using low-cost Arduino hardware to help legacy devices provide some data manipulation and concatenation on the edge. It’s important to start with a specific problem in mind and develop a solid functional specification for implementation. I’ve seen way too many examples of projects that go sideways because there isn’t a solid problem defined and specification produced for implementation.”
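The “data manipulation and concatenation” Hoff describes can be pictured with a minimal Python sketch. It is an illustration only, not Patti Engineering’s implementation: the `EdgeAggregator` class, the deadband threshold, the point names and the JSON payload layout are all assumptions. The idea is that an edge device drops readings that have barely changed and bundles the rest into a single message, so less data travels to the cloud.

```python
import json
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class EdgeAggregator:
    """Hypothetical edge-side filter: report only meaningful changes."""

    deadband: float = 0.5  # assumed threshold, in each signal's own units
    last_sent: Dict[str, float] = field(default_factory=dict)

    def changed_points(self, readings: Dict[str, float]) -> Dict[str, float]:
        """Return the points that moved by more than the deadband."""
        changed = {}
        for point, value in readings.items():
            previous = self.last_sent.get(point)
            if previous is None or abs(value - previous) > self.deadband:
                changed[point] = value
                self.last_sent[point] = value
        return changed

    def to_payload(self, machine_id: str, readings: Dict[str, float]) -> Optional[str]:
        """Concatenate changed points into one JSON message, or None if nothing changed."""
        changed = self.changed_points(readings)
        if not changed:
            return None
        return json.dumps({"machine": machine_id, "points": changed}, sort_keys=True)
```

In use, a first call such as `to_payload("press-1", {"temp": 70.0, "pressure": 3.0})` would report both points, while a later call with nearly identical values would return `None` and send nothing upstream.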

Drop-in computing stack

“Because edge computing is so fluidly defined, how it’s applied comes down to scale, workloads and objectives the user wants to execute. Edge computing is also location-specific, so its projects typically include sites in data centers, controls and plant operations, HMI and historians and servers, and other broad tasks that are typically prone to network time delays and latency,” says Devin Robertson, systems analyst for OT infrastructure and security at Interstates. “Edge computing is also typically performed by granular, line-specific devices that need to do cybersecurity-related operations. So, because they’re often on isolated networks, they may need to move further up in the network hierarchy to allow communications and relay their data.”

Robertson reports that one Interstates client, a consumer packaged goods (CPG) manufacturer, previously had wide variations in its OT computing infrastructure. It had no prior standardization across dozens of facilities worldwide, and each plant was responsible for sourcing, managing and maintaining its own OT computing. These functions consisted of all process controls required to run each plant, including PLCs, DCS, HMI, servers, and supporting security and maintenance tasks.

“Some facilities managed their OT computing well, and had capable servers and local control. However, other plants had patchworks of onsite servers doing different things, so the CPG company undertook a corporate initiative to standardize, and asked Interstates to work with them to design an architecture for an end-to-end, drop-in computing solution,” explains Robertson. “We started by using industry-standard design documents, and integrated those concepts with our own ideas on where we wanted to take this solution. Then, we worked to develop and build a proof of concept (PoC) in a lab environment. Its primary components include Dell’s PowerEdge servers, Cisco’s Ethernet networking, and VMware’s virtualization and storage technologies, such as vSphere and Virtual Storage Area Network (vSAN). All of this is managed by various software packages on top, including VMware’s vCenter software and Dell’s OpenManage software.”

What should stay on site?

Robertson adds that each plant retains its own server rack, and its existing sensors are still networked to its PLCs. The new OT computing stack at each plant only manages controls for that site, while Interstates also consolidates data from each of them into a standardized, central management plane.

So how do Interstates and its CPG client decide which data processing applications to run on the local computing stack and which to run in the cloud? Robertson reports that, if a workload or process is necessary for a production process to run safely under normal conditions, then it should happen at the plant.
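Robertson’s rule of thumb can be written as a one-line check. The Python sketch below is only an illustration: the `Workload` type, its field name and the example workloads are hypothetical, and a real placement decision would weigh more factors than this single flag.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    """Hypothetical description of an application the plant depends on."""

    name: str
    needed_for_safe_production: bool


def placement(workload: Workload) -> str:
    """Keep anything required for safe, normal production at the plant."""
    return "plant" if workload.needed_for_safe_production else "cloud-eligible"


# Example workloads are assumptions chosen for illustration.
examples = [
    Workload("HMI server", needed_for_safe_production=True),
    Workload("long-term trend reports", needed_for_safe_production=False),
]
decisions = {w.name: placement(w) for w in examples}
```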

“If you’re running a critical process in the cloud and it requires a wide area network (WAN) connection to talk to OT hardware such as PLCs, then you couldn’t run it if that WAN was interrupted. You’d also be wasting the process on bad product, and risking safety issues or damage to machinery. For example, if you have an active directory for logins or authenticating staff, and its domain controller is in an IT or OT cloud that loses its WAN link, then users and service accounts could quickly run into authentication issues,” says Robertson. “However, with standardized OT computing and a read-only domain controller at the plant, if there’s an outage in the WAN link, then users can keep their processes and operations running, and report up to the cloud when the WAN is back up. Anything a plant needs to make product, and do it safely and securely, should be at the plant. It may be 2024, but outages are still a major issue for many facilities and companies.”
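The pattern of running locally and reporting “up to the cloud when the WAN is back up” is often called store-and-forward. The Python sketch below is a minimal illustration under stated assumptions, not Interstates’ design: the `StoreAndForward` class, the `send` callback and the buffer size are hypothetical. Records queue at the plant while the link is down and drain in order once sending succeeds again.

```python
from collections import deque
from typing import Callable, Deque


class StoreAndForward:
    """Hypothetical plant-side buffer for cloud-bound records."""

    def __init__(self, send: Callable[[dict], bool], max_records: int = 10_000):
        # `send` is an assumed callback that returns True only when the
        # record reached the cloud; it stands in for any real WAN client.
        self._send = send
        # A bounded deque discards the oldest record once it is full.
        self._queue: Deque[dict] = deque(maxlen=max_records)

    def report(self, record: dict) -> None:
        """Queue a record locally, then try to drain the backlog."""
        self._queue.append(record)
        self.flush()

    def flush(self) -> int:
        """Send queued records oldest-first; stop at the first failure."""
        sent = 0
        while self._queue:
            if not self._send(self._queue[0]):
                break  # WAN still down: keep the record for a later attempt
            self._queue.popleft()
            sent += 1
        return sent

    @property
    def pending(self) -> int:
        """Number of records still waiting for the link to return."""
        return len(self._queue)
```

The plant-side process only ever calls `report()`, so production logic never waits on the WAN. The bounded queue is a design choice that trades the oldest records for a fixed memory footprint during a long outage.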

Robertson adds that implementing Interstates’ drop-in OT computing solution benefited its CPG client by standardizing its hardware and software deployments, simplifying its management and licensing tasks, and improving its cybersecurity with standardization that reduces its risk footprint and provides a clearer path to remediating vulnerabilities.

“We replaced a hodgepodge of inconsistent hardware and software with consolidated and controlled server models, along with new procedures that provided more predictable and auditable access control,” says Robertson. “This makes life easier for the client’s dedicated team that supports its deployed solutions. Plus, plant personnel can offload server infrastructure maintenance to that team, which is something they’re happy to get off their plate. And, standardizing the server infrastructure into a handful of predictable devices shrinks the client’s overall security exposure. Where they used to have 20 different server models that each needed unique updates, they now have just one to three models to update monthly or quarterly, which is much cleaner. Even though commitment is the biggest hurdle that any enterprise will run into with this type of project, it’s crucial to lean into standardizing your OT infrastructure to make it easier to configure, maintain, and operate processes, while reducing as much risk as possible for the enterprise.”

This is part five of Control's January/February cover story mini-series.