By Walt Boyes, Editor in Chief

We plan cover stories fairly far in advance. This was supposed to be a story of the convergences going on in safety systems in the process industries. Then the story was, as they say, overtaken by events. We will still look at those convergence trends, but we have to do it in a whole new light.

On Friday, April 2, 2010, at 12:30 a.m., employees of Tesoro Corp.'s Anacortes, Wash., refinery were starting up the naphtha unit after it had been down for maintenance. The unit caught fire and was seriously damaged. Seven workers were killed. The refinery's capacity to make unleaded gasoline was reduced by two-thirds.

Then, on April 5, 2010, in the Massey Energy-owned Upper Big Branch Mine near Montcoal, W. Va., an explosion killed 29. There also was a much larger mining catastrophe recently, killing over 100 miners in China, as well as another refinery problem in Gujarat, India.

Most recently, BP's Deepwater Horizon oil rig caught fire on April 21. Eleven workers are missing and presumed dead, and the environmental impact remains unclear.

Ever since the Producers and Refiners refinery in Parco, Wyo., exploded in April 1927, with a loss of 18 lives, the process industries have been faced with a continuing series of refinery, chemical plant, mining and even food plant disasters, which continue to happen with distressing regularity. Including mining, such as the Massey Coal explosion, there have been over a dozen accidents in process industry plants just since the first of the year, requiring shutdowns and causing injuries and some fatalities.

This is made worse by the knowledge that at least since the Buncefield explosion and fire in the U.K. and the BP Texas City disaster, both in 2005, end-user and vendor companies alike have been trying to understand what causes these accidents and seeking actively to prevent them. Based on the record, we haven't had a lot of success.

Some people simply shrug and point out that petrochemical plants aren't called "boom factories" for no reason. Others have elected, as apparently the management of Massey Coal did, to delay or defer installing the appropriate safety systems. But still others have been working very hard to develop ways to prevent accidents like Buncefield and BP Texas City.

There have been several approaches to preventing accidents. There has been a global drive toward using safety instrumented systems (SIS) that are designed and maintained to shut plants down in the event of failures. But SISs haven't stopped the accidents, even where they have been shown to be working. One of the most important findings in studying the Buncefield and Texas City accidents was that the operators were inundated by alarms. So EEMUA, the Engineering Equipment and Materials Users Association (www.eemua.co.uk/), the Abnormal Situation Management (ASM) Consortium (www.asmconsortium.net/Pages/default.aspx), ISA18 (www.isa.org), and the Center for Operator Performance (http://operatorperformance.org/) have focused on HMI design and alarm management. But alarm management hasn't stopped the accidents.

The vendor community has moved to incorporate the safety systems into the basic process control system (BPCS) interface—even while maintaining some separation—to provide a uniform engineering package, design interface and operator HMI so that operators in emergency situations will not have to interpret data coming to them in different formats. But a converged operator environment has not stopped the accidents.

So there is considerable controversy about what to do to prevent the deaths and injuries that are so regularly occurring in the process industries.

A Controversial Convergence of Systems

The strongest movement toward stopping the nearly continuous stream of accidents is the convergence of systems. Fire and gas safety systems are being incorporated into SISs, and alarm management systems have been redesigned and respecified (see the EEMUA and ASM Consortium guidelines and the new ISA18.2 standard, for example). But is this convergence a good thing, and if it is, is it enough?

John Rezabek, process control specialist at ISP Corporation (www.ispcorp.com) in Lima, Ohio, doesn't think so. "They are separate efforts and disciplines that need to be done well," he says. "Alarm management is an endeavor to help the humans function on a higher level with better information (and therefore more safely). SIS is an effort to design an autonomous interlock to save the humans from themselves when all else fails."

Todd Stauffer, director of alarm management services at exida (www.exida.com), a major safety consultancy, disagrees. "The disciplines of alarm management and functional safety have always been interconnected. The release of the ISA18.2 standard in June of 2009 has accelerated the pace of convergence and is leading practitioners to take steps to treat these two disciplines holistically."

Nicholas P. Sands, process control engineer for E. I. DuPont in Wilmington, Del., and co-chair of the ISA18 Alarm Management Standard, says, "My opinion is that there are some convergences in safety thinking. I think the adoption of the performance-based approach to safety systems is changing some long-held prescriptive views, especially around burner management systems, and I think that is a good thing."

Carl Moore, senior instrumentation engineer, SIS, at Mustang Engineering (www.mustangeng.com), one of the world's largest control system integrators, sees the advantages of convergence, but says, "The current thinking and method of implementation for a number of oil-and-gas companies offshore is to have an independent fire-and-gas (F&G) system with a SIL 3 logic solver, an independent emergency shutdown (ESD) system (with another SIL 3-rated logic solver), an independent process shutdown system (SIS) with yet another SIL 3-rated logic solver, and an independent BPCS. Fire detection and hydrocarbon leak detection are implemented in the F&G system, which must pass this information on to the ESD system for trip or open action."

This is more than a little clunky. "By combining the F&G system with the ESD system, you eliminate one level of passing 'vitally needed information' along to another SIS type system," Moore says. "I don't see combining F&G or ESD with the process shutdown SIS system, however."

There are clear arguments for convergence of these systems. Scott Hillman, director of marketing at Honeywell Process Solutions (www.hps.honeywell.com), who is a subject matter expert on fire and gas safety as well as SIS, says, "I think they will continue to converge because it's more beneficial for the plant operator and others involved in actual operations to have the right information at their fingertips to make decisions. Having that information is contingent on two things. First, the operations personnel need to get actual information instead of just raw data, as a lot of raw data already exists. So the integration has to be intelligent enough to provide context-specific, actionable information. Two, it is contingent on the end users and their work processes."

Charles Fialkowski, Siemens Industry's safety system product manager, says, "I see these convergences as advantageous and think they will promote increased safety and security. As systems become more and more integrated, the need for a proper blend of industry codes and standards to converge will also become more important."

Simon Pate, director of projects and systems at Detector Electronics Corp. (www.det-tronics.com), agrees with Fialkowski's last point. "In my opinion," he says, "one of the issues with the F&G systems that is often overlooked is the legislative requirements." There are different requirements in many different jurisdictions. Pate continues, "So it is fine for a process safety expert to design a fire and gas system to an SIS requirement, but he must also consider the legislative requirements that fire and gas systems are required to meet."

In some cases, legislation is lagging behind technology in the safety arena.

It Isn't the Systems. It's the Culture.

Figure 1. Stills of video footage of the Tesoro Refinery at Anacortes, Wash., shot after the fire was knocked down. Courtesy of KIRO Eyewitness News, Seattle, www.kirotv.com.

What's the biggest single issue hindering safety and security implementation in the process industries? Scott Hillman says, "In a word, people."

Is the convergence process with systems advantageous? "I really do think so," says Marcelo Mollicone, technical manager for SYM Consultoria of Camaçari, Brazil. "Safety culture is very important to achieve a safe process. Safety is not only related to SIF or SIS. It is a broad concept that should be on the mind of all company personnel. The convergences help to 'spread the word' as getting more people around the concepts."

But Rockwell Automation (www.rockwellautomation.com) safety guru Paul Gruhn says it isn't the equipment or systems that will really improve safety. "As [British chemical safety expert and author] Trevor Kletz has said, 'All accidents are due to bad management.' Reading the Baker Panel report on the BP Texas City accident reinforces the idea that safety must come from the top down. If management does not believe in the benefits of safety, even though study after study has shown that productivity improves when safety improves, then a culture reinforcing safety and security will not develop."

Emerson Process Management's (www.emerson.com) safety systems product manager Mike Boudreaux says, "Successful experience going unprotected can be the biggest obstacle for change" in the corporate culture. He continues, "It is so easy to fall into the trap that 'we've been doing it this way for so long, and nothing bad has happened…' This is precisely what happened at BP. The Chemical Safety Board and the Baker Panel reports indicate that the start-up of the isomerization unit had been done exactly the same way more than fifteen times with no adverse effects. What had really been happening is that the vapor cloud had dissipated before anyone applied a spark. Once there was an ignition source, the explosion was a certainty."

Boudreaux goes on to point out, "When industry incidents do occur, the results of incident investigations are sometimes kept secret in order to avoid damage to the company's public image or protect against lawsuits from injured parties."

An example of this is the accident that killed two operators at Bayer Crop Science in West Virginia in 2008. When called to testify before Congress, William Buckner, President and CEO of Bayer Crop Science, said, "…in January 2009, there were some in company management who initially thought that the Maritime Transportation Security Act of 2002, 46 U.S.C. Chapter 701, could be used to refuse to provide information to the CSB about issues regarding methyl isocyanate (MIC) beyond those related to the MIC day storage tank in the unit involved in the incident. We admit that." According to the CSB report, Bayer also managed to lose major pieces of evidence and cleaned up the event site before allowing CSB investigators onsite.

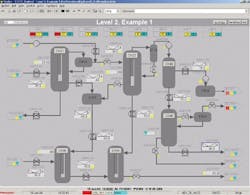

Figure 2. Clean and clear HMI screens for operators are part of the EEMUA, ASM Consortium and ISA18.2 standard efforts to better enable operators to respond effectively to alarms.

The Problem of Complex Systems

Donna Kasuska, a chemical engineer with Pegasus Group Integrated, and ChemConscious Inc. (www.everydaychemicals.com) in Downingtown, Pa., says, "Sophisticated control systems can significantly reduce plant failures by eliminating or improving the human interface. But even the most sophisticated system has to first be considered by humans, and is also subject to use and maintenance by humans."

Large automation and control systems are what are called complex systems. Complex systems do not behave the way simple systems do. A simple system says, for example, that cause A leads to effect B. Not doing A means that B does not happen. A complex system has so many dependencies and interrelations that it is not possible to predict accurately that A will always lead to B. Combinations of causes and combinations of effects make the behavior of complex systems often non-linear. Complex systems often behave in ways more predictable by chaos theory than by a linear engineering model. "Understanding these systems and analyzing or accurately predicting their behavior is often difficult," say Karen Marais and Nancy G. Leveson, from MIT, in their paper "Archetypes for Organizational Safety." Leveson served on the Baker Panel investigating the BP Texas City accident in 2005.

"We are seeing a growing number of normal, or system, accidents which are caused by dysfunctional interactions between components, rather than component failures. Such accidents are particularly difficult to predict or analyze. Accident models focusing on direct relationships among component failure events or human errors are unable to capture these accident mechanisms adequately," Marais and Leveson continue.

Their paper goes on. "One of the worst industrial accidents in history occurred in December 1984 at the Union Carbide chemical plant in Bhopal, India. The Indian government blamed the accident on human error in the form of improperly performed maintenance activities. Using event-based accident models, numerous additional factors involved in the accident can be identified. But such models miss the fact that the plant had been moving over a period of many years toward a state of high-risk where almost any change in usual behavior could lead to an accident."

So what happens when a plant, after an accident, for example, institutes safety actions? "Well-intentioned, commonplace solutions to safety problems often fail to help," Marais and Leveson point out. "They have unintended side effects or exacerbate problems. A typical 'fix' for maintenance-related problems is to write more detailed maintenance procedures and to monitor compliance with these procedures more closely."

David Strobhar, president of Beville Engineering (www.beville.com) and director of the Center for Operator Performance, has a similar comment. "I recently began thinking again on safety culture," he says. "I was at a plant that went to extremes to communicate and emphasize safety. I didn't get the feeling that they were all that safe. So I am still trying to determine what it is that makes a safety culture. I think of a Dilbert cartoon where it was said that if you have to have a 'name' for it, you probably don't have it."

And What about Security?

"Back in the old proprietary days," Scott Hillman says, "you didn't talk much about cybersecurity when discussing plant security. Today, cybersecurity is at the forefront of the conversation largely due to the advent of open systems some 15 years ago. When we put open systems into the control environment, it resulted in much greater risks, and hence, the need for more effective cybersecurity measures."

"I believe," says Joel Langill, a TÜV-certified functional safety engineer and industrial security consultant for Englobal Automation (www.englobal.com), a major EPC firm located in Houston, "that there is a great deal of synergy between functional safety and industrial security. This is demonstrated not only by ISA's creation of a joint working group between ISA84 (safety) and ISA99 (security), but also by several industry trends, such as the merger of exida and Byers Research. Both disciplines address the protection of assets through risk reduction."

Functional security researchers like John Cusimano of exida and Joe Weiss of Applied Control Solutions (http://realtimeacs.com), author of Control's "Unfettered" blog and the newly published book Protecting Industrial Control Systems from Electronic Threats (Momentum Press), continue to point out that there is a long way to go.

"Vendors have made improvements in the level of security in their products by closing some ports," Carl Moore says, "but they haven't fully implemented ANSI/ISA-99.00.01-2007, nor have the onsite oil-and-gas users. Most locations think a good firewall is enough to stop malicious hackers, but evidence has proven otherwise. And sites still insist on having dial-in remote troubleshooting for vendor support, and this always leaves a path for malicious hackers."

So What Should We Do About This?

Are functional safety and security an insoluble problem? Scott Hillman thinks training is one of the answers. "I don't think it is possible to develop a safety and security culture without training. Lives are on the line, and these are procedures that must be drilled into every single plant employee. You might install the fanciest equipment in the world, but if you put it all in the same window in front of the operators without training them how to read or respond to the data, it will undoubtedly lead to chaos. So training is a very critical part of the equation."

Carl Moore agrees. "Both safety and security training are vital for all process-related plants," he says. "Management often understands the safety piece of this, but rarely do they understand the security piece."

Managing risk in complex systems like the process industries is a dynamic process, incorporating safety systems, security systems, product design and ongoing training. But it all starts with management. There is an old Yiddish proverb, "A fish stinks from the head down." In the 1960s, the Dow Chemical Company's Levi Leathers realized this when he mandated operating safely as Dow's primary mission. Dow's "stateful control" systems and safety culture have made the company one of the safest in the petrochemical industry. Leathers showed that if management insisted on a specific standard of operations behavior, it would become cultural and ingrained in the operating practices of the company. That's the first step in achieving a safety and security culture and making it work for the long term. "When a company has a publicly stated goal of 'Safety is our number one priority,'" Paul Gruhn says, "ask the plant manager if he'd be willing to live on the property with his family. Actions speak louder than words."
