Common engineering idealizations are often fully adequate representations of nature, and they are useful for design and analysis. But recognize that they're human conveniences, chosen to balance simplicity with performance. Ideally, the error caused by a simplification is smaller than the error arising from the “givens” or other uncertainties in the context, which makes the idealization fully adequate. But it is not always so. Be aware of the impact of idealizations when you're using models and data. Here are some common idealizations in process modeling and control.

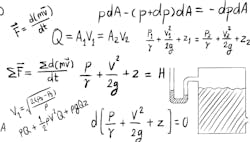

The velocity exponent in the ideal (Bernoulli) flow relation is 2. It is used for friction losses in pipes and fittings, and it leads to the square-root relationship in orifice equations and in the control valve flow response to ∆P. Exponents of 1.8 and 0.52 often match measurements somewhat better. However, considering the effects of turbulence noise on measurements, installation artifacts and uncertainty in friction factors, the ideal exponents of 2 and 0.5 are often fully adequate.
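To get a feel for how much the choice of exponent matters, here is a minimal sketch that compares the ideal and the fitted exponents as simple power laws. The function names and the test ratios are illustrative assumptions, and proportionality constants are deliberately left out so that only ratios are compared.

```python
def dp_ratio(flow_ratio: float, exponent: float = 2.0) -> float:
    """Factor by which pressure drop changes when flow changes by flow_ratio,
    assuming dP is proportional to Q**exponent."""
    return flow_ratio ** exponent


def q_ratio(dp_factor: float, exponent: float = 0.5) -> float:
    """Factor by which flow changes when dP changes by dp_factor,
    assuming Q is proportional to dP**exponent."""
    return dp_factor ** exponent


if __name__ == "__main__":
    # Friction loss: ideal exponent 2 versus the fitted 1.8
    for q in (0.5, 2.0):
        print(f"flow x{q}: dP x{dp_ratio(q, 2.0):.3f} (n=2) "
              f"vs x{dp_ratio(q, 1.8):.3f} (n=1.8)")
    # Orifice or valve: ideal exponent 0.5 versus the fitted 0.52
    for dp in (0.25, 4.0):
        print(f"dP x{dp}: flow x{q_ratio(dp, 0.5):.3f} (m=0.5) "
              f"vs x{q_ratio(dp, 0.52):.3f} (m=0.52)")
```

For a doubling of flow, the two friction-loss exponents differ by roughly 15% in predicted pressure drop. Whether that matters depends on how it compares with the measurement noise and friction-factor uncertainty in the application.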

There are many other approximations, such as the ideal gas law for the relationship among P, V and T. Activity coefficients are often assumed to be unity. Antoine’s equation and Raoult’s law relate vapor and liquid composition. Homogeneous reaction kinetic laws and the Arrhenius model for temperature dependence are common. These are all asymptotic limits for low pressure, low dilution or very high flow rate conditions. But, of course, the error they introduce may be tolerable for the application.

A valve may be marketed as having an equal-percentage relation between valve flow rate and stem position. If the characteristic is true, the valve can never close. Real equal-percentage valves have a modified characteristic at near-closed conditions.
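A small sketch shows why a true equal-percentage characteristic never reaches zero flow. It assumes the common textbook form f = R^(x - 1), where x is the fractional stem position and R is the rangeability; the value R = 50 is a hypothetical choice for illustration, not a figure from any particular valve.

```python
def equal_percentage(stem_fraction: float, rangeability: float = 50.0) -> float:
    """Fraction of maximum flow for an ideal equal-percentage trim.

    Assumes f = R**(x - 1) with x in [0, 1]; R = 50 is an illustrative default.
    """
    return rangeability ** (stem_fraction - 1.0)


if __name__ == "__main__":
    for x in (0.0, 0.1, 0.5, 0.9, 1.0):
        print(f"stem {x:4.0%} -> flow {equal_percentage(x):6.2%}")
    # Equal stem increments multiply flow by the same factor anywhere in the range
    print(equal_percentage(0.3) / equal_percentage(0.2))
    print(equal_percentage(0.8) / equal_percentage(0.7))
```

At zero stem position the ideal curve still passes 1/R of maximum flow, which is why a real trim has to depart from the ideal curve near the seat.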

Constant density is often presumed, despite the effects of temperature, pressure, composition, entrained gases, dimerization, etc.

Are liquids and gases pure? The reality is there are dissolved, non-condensable gases in liquids, and entrained mist or particulates in gases. Cavitation and choked flow might be due to gas effervescence rather than the classic liquid vapor-pressure explanation.

Can a process be first-order-plus-deadtime (FOPDT)? This model may have utility for tuning controllers and dynamic compensators, but I’ve never seen it be true to reality. I think SOPDT models are much truer to real process behavior, but their complication in designing control algorithms doesn't seem to have sufficient benefit to compensate for the effort.
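The difference between the two model forms is easiest to see in their unit-step responses. The sketch below uses the standard analytic solutions; the gain, time constants and deadtime are hypothetical values, and the SOPDT form assumes an overdamped process with two distinct time constants.

```python
import math


def fopdt_step(t: float, gain: float = 1.0, tau: float = 5.0,
               theta: float = 1.0) -> float:
    """Unit-step response of a first-order-plus-deadtime model."""
    if t < theta:
        return 0.0
    return gain * (1.0 - math.exp(-(t - theta) / tau))


def sopdt_step(t: float, gain: float = 1.0, tau1: float = 4.0,
               tau2: float = 1.0, theta: float = 1.0) -> float:
    """Unit-step response of an overdamped second-order-plus-deadtime model.

    Requires tau1 != tau2 (illustrative assumption for this closed form).
    """
    if t < theta:
        return 0.0
    s = t - theta
    decay = (tau1 * math.exp(-s / tau1) - tau2 * math.exp(-s / tau2)) / (tau1 - tau2)
    return gain * (1.0 - decay)


if __name__ == "__main__":
    for t in (0.5, 1.0, 1.5, 3.0, 6.0, 20.0):
        print(f"t={t:5.1f}  FOPDT={fopdt_step(t):.4f}  SOPDT={sopdt_step(t):.4f}")
```

Just after the deadtime, the FOPDT response starts with an abrupt slope of gain/tau, while the SOPDT response starts with zero slope and follows an S-shaped curve.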

When, and when not, to idealize

Device calibrations are perfect (because our techs are the best anywhere). So, you can be assured that a 50% controller output means 12 mA to the I/P device, means 9 psi to the actuator, means a 50% stem position.
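The signal chain in that claim is just a series of linear rescalings, which can be sketched as follows. The 4 to 20 mA and 3 to 15 psi ranges are assumed here because they are consistent with the 12 mA and 9 psi midpoints; the `ip_zero_error_psi` parameter is a hypothetical addition to show how a small calibration offset would propagate.

```python
def rescale(value: float, in_lo: float, in_hi: float,
            out_lo: float, out_hi: float) -> float:
    """Linearly map value from [in_lo, in_hi] to [out_lo, out_hi]."""
    return out_lo + (value - in_lo) * (out_hi - out_lo) / (in_hi - in_lo)


def stem_position(output_pct: float, ip_zero_error_psi: float = 0.0) -> float:
    """Stem position (%) for a controller output (%), assuming ideal linear devices.

    ip_zero_error_psi is a hypothetical zero offset on the I/P output.
    """
    current_ma = rescale(output_pct, 0.0, 100.0, 4.0, 20.0)        # controller -> mA
    pressure_psi = rescale(current_ma, 4.0, 20.0, 3.0, 15.0)       # I/P: mA -> psi
    pressure_psi += ip_zero_error_psi                              # assumed miscalibration
    return rescale(pressure_psi, 3.0, 15.0, 0.0, 100.0)            # actuator: psi -> % stem


if __name__ == "__main__":
    print(stem_position(50.0))                         # idealized chain
    print(stem_position(50.0, ip_zero_error_psi=0.3))  # with a 0.3 psi I/P offset
```

With perfect calibration the chain returns 50% stem position for 50% output. A 0.3 psi offset at the I/P alone shifts the stem by about 2.5%, before any nonlinearity, stiction or hysteresis is considered.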

Calibrations are linear. This is defended by the perfect knowledge that responses are linear. Measurements are true. Future economic predictions can be based on today’s prices and costs. The volume of liquid in a tank is height times cross-sectional area. Indentations, ribs, exit drain lines and bottom curvature can be ignored. Adiabatic, isothermal and isentropic are all achievable. Plug flow happens in packed columns and pipes.
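As one concrete check on the tank-volume idealization, the sketch below compares height times area with the volume of a vertical cylindrical tank that has a hemispherical bottom. The geometry is a hypothetical example, and the level is assumed to be measured from the lowest point of the vessel.

```python
import math


def ideal_volume(level: float, radius: float) -> float:
    """Idealized volume: liquid height times cross-sectional area."""
    return math.pi * radius ** 2 * level


def volume_hemispherical_bottom(level: float, radius: float) -> float:
    """Volume in a vertical cylinder with a hemispherical bottom (assumed geometry).

    level is measured from the lowest point of the hemisphere.
    """
    if level <= radius:
        # Liquid is still within the bowl: spherical-cap volume
        return math.pi * level ** 2 * (3.0 * radius - level) / 3.0
    bowl = 2.0 * math.pi * radius ** 3 / 3.0
    return bowl + math.pi * radius ** 2 * (level - radius)


if __name__ == "__main__":
    r = 1.0
    for h in (0.25, 0.5, 1.0, 2.0, 5.0):
        ideal = ideal_volume(h, r)
        actual = volume_hemispherical_bottom(h, r)
        print(f"level={h:4.2f}  ideal={ideal:7.3f}  actual={actual:7.3f}  "
              f"overestimate={(ideal - actual) / actual:6.1%}")
```

For this geometry the idealization overestimates the volume by a fixed amount once the level is above the bowl, so the relative error is large at low levels and shrinks as the tank fills.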

When you're using such conveniences, be sure you understand the implicit presumptions, and can validate that the error caused by the idealization is negligible relative to the uncertainties that you can't control (such as the basis for the design, or givens in the situation, or proximity to a constraint). The use of an idealization can't be defended because 1) it makes analysis simple, or 2) it's commonly used, or 3) it's what the modeling software experts provided, or 4) "we’ve always done it that way." A simplification needs to be defended by utility: the gain in convenience must be large relative to the impact on error.

On the other hand, don’t claim that a more rigorous approach should be used when the convenient method is adequate. Balance perfection with sufficiency.

About the Author

R. Russell Rhinehart

Columnist

Russ Rhinehart started his career in the process industry. After 13 years and rising to engineering supervision, he transitioned to a 31-year academic career. Now “retired,” he returns to coaching professionals through books, articles, short courses, and postings to his website at www.r3eda.com.