Béla Lipták, PE, control consultant, is also editor of the Instrument Engineers' Handbook and is seeking new co-authors for the forthcoming edition of that multi-volume work. He can be reached at [email protected].

The Safety Integrity Level

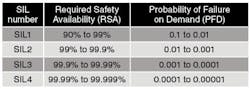

The SIS standard considers SIL to be a quantifiable measurement of risk that can be used to establish safety performance targets. Table 1 defines the levels of reliability that such a safety system can potentially be designed to achieve.

The required safety availability (RSA) value refers to the reliability of a particular safety control loop (called a safety instrumented function or SIF) to protect the process from accidents. Conversely, the probability of failure on demand (PFD) is the mathematical complement of RSA (PFD = 1 - RSA), expressing the probability that the SIF will fail to do its job. Unfortunately, it's much easier to write three zeros in a table than to increase the safety of a real process a thousandfold.
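Table 1 is not reproduced in this text, so the short Python sketch below assumes it follows the low-demand-mode bands of IEC 61508/IEC 61511 (SIL1: PFD from 0.01 up to 0.1, through SIL4: PFD from 0.00001 up to 0.0001). It only illustrates the arithmetic described above, RSA = 1 - PFD, and how a PFD value falls into a SIL band; the names `SIL_BANDS`, `rsa_from_pfd` and `sil_band` are hypothetical.

```python
from typing import Optional

# Assumed low-demand-mode bands (IEC 61508 / IEC 61511); Table 1 is presumed to match.
# Each entry: (SIL, lower PFD bound inclusive, upper PFD bound exclusive)
SIL_BANDS = [
    (4, 1e-5, 1e-4),
    (3, 1e-4, 1e-3),
    (2, 1e-3, 1e-2),
    (1, 1e-2, 1e-1),
]


def rsa_from_pfd(pfd: float) -> float:
    """Required safety availability is the complement of PFD: RSA = 1 - PFD."""
    return 1.0 - pfd


def sil_band(pfd: float) -> Optional[int]:
    """Return the SIL band a PFD value falls into, or None if outside SIL1 to SIL4."""
    for sil, low, high in SIL_BANDS:
        if low <= pfd < high:
            return sil
    return None


# Each step down in PFD adds one more "9" to RSA and one more zero to the table.
for pfd in (0.05, 0.005, 0.0005):
    print(f"PFD={pfd:g}  RSA={rsa_from_pfd(pfd):.2%}  SIL={sil_band(pfd)}")
```

Moving from the first to the last row is exactly the hundredfold-per-two-levels improvement that is trivial on paper and hard in the plant.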

Yet when the CEO of an insurance company sees this table with all those zeros, particularly while he is having a nice business lunch with a charming salesman, the table looks pretty good, and by the time the coffee is served, he might agree to insure the plant if it's designed for a target of, say, SIL3. Right? Well, let's look at this more closely.

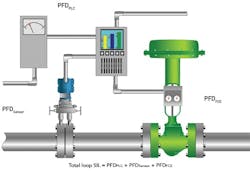

Figure 1: Loop components do not have SIL levels, only probability of failure on demand (PFD) values, and these PFD values do not determine the SIL level of the loop (as implied here), but only indicate that in the supplier's judgement, these components are suitable for being used in a particular SIL level system.

Based on a drawing from Emerson Process Management.


Safety at the Component Level

As shown in Figure 1, at the individual instrument component level (sensor, valve, safety control logic, power supply, communication), the standard only requires determination of PFD; the components themselves don't have safety integrity levels. The main problem with the PFD values is that they're determined by self-certification by the manufacturer or by the manufacturer's hired evaluation firm, and this "certification" doesn't need to be approved by any safety authority. Also, component PFDs don't determine the SIL level of the loop. They only imply that the loop components are suitable for a particular level.

On top of that, the standard doesn't even apply to pneumatic or hydraulic logic systems, nor does it apply to fire and gas systems, safety alarms, safety controls or to plants that were in operation before 1996.

SIL Level of a Loop

The SIL level of a loop is not the sum of the PFDs of its components, but is the product of the loop's safe failure fraction (SFF) and the PFDs of the loop components. The equations for calculating SFF, PFD and SIL are:

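$$\mathrm{SFF} = \frac{\lambda_{SD} + \lambda_{SU} + \lambda_{DD}}{\lambda_{SD} + \lambda_{SU} + \lambda_{DD} + \lambda_{DU}}$$

$$\mathrm{PFD} = \frac{\lambda_{DU}\,(\text{Proof Test Interval})}{2} + \lambda_{DD}\,(\text{Down Time or Repair Time})$$

$$\mathrm{SIL} = (\mathrm{SFF})(\mathrm{PFD})$$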

Table 1. A SIL level is a quantifiable measurement of risk used to establish safety performance targets.

I will not bother to list what each of the terms in these equations means or explain how they can be determined. I will only note (obviously jokingly) that applying them takes the collaboration of an IRS accountant and a rather "flexible" consultant, whose conclusions might just happen to coincide with the plant's views.
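For readers who want to see the first two equations in action, here is a minimal Python sketch. It uses the standard meaning of the failure-rate terms (λSD safe detected, λSU safe undetected, λDD dangerous detected, λDU dangerous undetected, all per hour), assumes the simplified single-channel form of the PFD equation exactly as written above, and uses hypothetical failure-rate data; the class and function names are illustrative only.

```python
from dataclasses import dataclass


@dataclass
class FailureRates:
    """Failure rates in failures per hour (hypothetical values below)."""
    sd: float  # lambda_SD: safe, detected
    su: float  # lambda_SU: safe, undetected
    dd: float  # lambda_DD: dangerous, detected
    du: float  # lambda_DU: dangerous, undetected


def safe_failure_fraction(r: FailureRates) -> float:
    """SFF = (lSD + lSU + lDD) / (lSD + lSU + lDD + lDU)."""
    not_dangerous_undetected = r.sd + r.su + r.dd
    return not_dangerous_undetected / (not_dangerous_undetected + r.du)


def pfd(r: FailureRates, proof_test_interval_h: float, repair_time_h: float) -> float:
    """PFD = lDU * (proof test interval) / 2 + lDD * (down or repair time)."""
    return r.du * proof_test_interval_h / 2 + r.dd * repair_time_h


# Hypothetical transmitter: yearly proof test (8,760 h), 8 h repair time
rates = FailureRates(sd=2e-7, su=1e-7, dd=5e-7, du=2e-7)
print(f"SFF = {safe_failure_fraction(rates):.0%}")
print(f"PFD = {pfd(rates, proof_test_interval_h=8760, repair_time_h=8):.2e}")
```

Note how strongly the result depends on the assumed proof test interval and on the supplier's failure-rate figures, which is where the "flexibility" mentioned above comes in.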

SIL and Our Manual Culture

According to one survey, 70% of furnace explosions occur during startup and shutdown, when operator involvement is at its maximum, and 21% occur because operators made undocumented changes after commissioning. Only 9% are due to causes not related to operators.

I've written a lot about the need for overrule safety control (OSC) for critical processes. The key difference between SIS and OSC is that OSC overrules! In other words, it brings the plant into a safe state no matter what the basic control system or the operators do.
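The overrule principle can be sketched in a few lines of Python. This is only an illustration under assumed conditions: a hypothetical fired heater whose safe state is "fuel valve closed, purge fan running," and a hypothetical trip condition of flame loss or low fuel pressure. Whatever the BPCS or the operator requests, the safe state is what reaches the field once a trip is active.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Outputs:
    fuel_valve_open_pct: float  # requested fuel valve opening, 0-100 %
    purge_fan_on: bool


# Hypothetical safe state for a fired heater: fuel shut off, purge fan running
SAFE_STATE = Outputs(fuel_valve_open_pct=0.0, purge_fan_on=True)


def trip_active(flame_detected: bool, fuel_pressure_kpa: float, low_limit_kpa: float) -> bool:
    """Hypothetical trip condition: loss of flame or low fuel pressure."""
    return (not flame_detected) or fuel_pressure_kpa < low_limit_kpa


def osc_select(requested: Outputs, trip: bool) -> Outputs:
    """Return the outputs actually sent to the field.

    When a trip is active, the safe state overrules whatever the BPCS or the
    operator requested; otherwise the request passes through unchanged.
    """
    return SAFE_STATE if trip else requested


# An operator asks for 40% fuel while the flame has been lost
requested = Outputs(fuel_valve_open_pct=40.0, purge_fan_on=False)
sent = osc_select(requested, trip_active(flame_detected=False, fuel_pressure_kpa=120.0, low_limit_kpa=50.0))
print(sent)
```

The design choice is that the selection happens after all human and BPCS inputs, so no request can bypass it.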

SIS doesn't do that because the committee that developed it still lives in a "manual culture." They still trust men more than machines. They do not understand that OSC is also made by men, except that the men who design the OSCs are not panicked operators running around in the dark at 2 a.m., but professional control engineers who had months to identify all potential "what if" sources of accidents and to evaluate their consequences before deciding on the emergency actions to be triggered when they arise.

It is this hazard and operability (HAZOP) study during the design phase that is the key to safety. It must be conducted by a team whose members are fully familiar with the process from their diverse perspectives, including chemical, mechanical, heat transfer, electrical, etc. In addition, the team should be led by a process control engineer who is knowledgeable about the state of the art of safety automation. This what-if analysis (fault tree analysis) is the key, and SIS standards committees are a long way from understanding that.
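To show what the fault tree side of such a what-if analysis computes, here is a minimal Python sketch of AND/OR gate arithmetic. It assumes the basic events are statistically independent, and the furnace events and their per-demand probabilities are hypothetical numbers chosen only for illustration.

```python
from math import prod


def or_gate(*probs: float) -> float:
    """Probability that at least one of the independent input events occurs."""
    return 1.0 - prod(1.0 - p for p in probs)


def and_gate(*probs: float) -> float:
    """Probability that all of the independent input events occur together."""
    return prod(probs)


# Hypothetical per-demand probabilities for a furnace "what if" case
p_flame_scanner_fails = 0.01
p_fuel_valve_sticks_open = 0.02
p_operator_misses_alarm = 0.1

# Top event: fuel keeps flowing after a flame loss. It requires the automatic
# trip path to fail (scanner OR valve) AND the operator to miss the alarm.
p_trip_path_fails = or_gate(p_flame_scanner_fails, p_fuel_valve_sticks_open)
p_top_event = and_gate(p_trip_path_fails, p_operator_misses_alarm)
print(f"trip path fails: {p_trip_path_fails:.4f}, top event: {p_top_event:.5f}")
```

The arithmetic is the easy part; the value of the study lies in the team finding every branch of the tree in the first place.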

BPCS: basic process control system

ESD: emergency shutdown

FMEA: failure modes and effects analysis

FMECA: failure modes, effects and criticality analysis

FGS logic: fire and gas system logic

FOD: failure on demand (PFD)

F&G: fire and gas

FT: fault tolerance

HAZOP: hazard and operability study

HIPS: high-integrity protection system

IEC: International Electrotechnical Commission

IEC 61508: generic functional safety standard

IEC 61511: good engineering practices for SIS

IPF: instrument protective functions

ANSI/ISA 84.00.01 (2004): IEC 61511 relaxed for pre-1996 plants

LOPA: layers of protection analysis

OSC: overrule safety controls

MOC: management of change

PFD: probability of failure on demand

PHA: process hazard analysis

RL: reliability level

RSA: required safety availability

SFF: safe failure fraction

SH&E: safety, health and environmental

SIF: safety instrumented function

SIL: safety integrity level

SIS: safety instrumented system

UPS: uninterruptible power supply

What Else?

Probably the worst aspect of SIL ratings is that they do not apply to entire unit operations such as boilers or distillation columns. In fact, a boiler with a SIL3 steam overpressure protection system can quite possibly also have a SIL1 low water level protection loop.

It's also unfortunate that the SIS committees don't like plain English. Their work is peppered with high-tech buzzwords, abbreviations and acronyms that make these documents harder to read and hence less valuable.

In January, Part 2 will outline the safety system standards that we really need.