...to Tango

Data Collection and Optimization, Part 2

See Part 1, It Takes ...

By Jim Montague, Executive Editor

Another dance? Mais oui. Faster this time? I guess. So you might think that coaxing a few precious nuggets of useful data out of your application would be the hardest part of data collection, and that all you need to do to optimize your process is plug them into it. Sorry, Mr. Astaire.

In fact, plowing intelligence back into a process to make improvements can be even harder than finding it in the first place. Besides choosing and implementing the right hardware and software, many other jobs must be done right for optimization efforts to really pay off. Probably the two most important are developing models and model-predictive controls that genuinely reflect the applications in which they're going to be used, and then training operators, technicians, engineers and managers to make those controls part of their daily work routines.

Instilling Stability

Prohibition may be over, but making alcohol is still risky. For example, the East Kansas Agri-Energy (EKAE) ethanol plant in Garnett uses a six-step, dry-mill process that converts corn into sugar, ferments the sugar and then distills the result into alcohol. It annually produces 35 million gallons of 200-proof ethanol, 68,000 tons of dried distillers grains with solubles (DDGS) and 110,000 tons of wet distillers grains with solubles (WDGS). Doug Sommer, EKAE's plant manager, reports that the cooking process is difficult to control because of disturbances from in-plant water recycling. Trying to maintain consistent DDG and syrup-solids moisture levels adds further challenges.

More recently, rising ethanol demand pushed EKAE to move from a half-week to a full-time production schedule. To increase its dryer capacity by 4% to 6%, reduce energy use by 2.5% to 3% and increase ethanol production through more stable plant control, EKAE enlisted Rockwell Automation subsidiary Pavilion Technologies to help implement an advanced process control (APC) system for the plant's milling, cooking and stillage-dehydration sections. The APC system is based on nonlinear model-predictive control (NMPC), an algorithm that uses a dynamic model of EKAE's process to predict future process responses and optimize them. EKAE's models combine fundamental (first-principles) models with empirical models derived from plant measurements.
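The core idea behind NMPC is a receding horizon: predict the process over a short window with a model, pick the input that gives the best predicted result, apply only the first move, then repeat at the next sample. The sketch below illustrates that loop under loudly stated assumptions. The nonlinear model `y[k+1] = a*y[k] + b*tanh(u[k])`, its coefficients, the cost weights and the grid search over constant ("move-blocked") inputs are all hypothetical; this is not Pavilion's algorithm or EKAE's process model.

```python
import math

# Hypothetical single-input, single-output process model (NOT EKAE's):
#   y[k+1] = A*y[k] + B*tanh(u[k])
A, B = 0.8, 2.0
HORIZON = 5          # prediction horizon, in samples
MOVE_PENALTY = 0.1   # weight discouraging large input changes


def predict(y0: float, u: float, steps: int) -> list[float]:
    """Simulate the assumed model forward, holding the input u constant."""
    ys, y = [], y0
    for _ in range(steps):
        y = A * y + B * math.tanh(u)
        ys.append(y)
    return ys


def choose_move(y0: float, u_prev: float, setpoint: float,
                candidates: list[float]) -> float:
    """Pick the candidate input with the lowest predicted cost."""
    def cost(u: float) -> float:
        tracking = sum((setpoint - y) ** 2 for y in predict(y0, u, HORIZON))
        return tracking + MOVE_PENALTY * (u - u_prev) ** 2
    return min(candidates, key=cost)


def run(setpoint: float, steps: int = 30) -> float:
    """Closed loop: re-plan every sample and apply only the chosen move."""
    candidates = [i / 20 for i in range(-40, 41)]  # inputs from -2.0 to 2.0
    y, u = 0.0, 0.0
    for _ in range(steps):
        u = choose_move(y, u, setpoint, candidates)
        # The "plant" here is the model itself, so there is no model mismatch.
        y = A * y + B * math.tanh(u)
    return y


if __name__ == "__main__":
    print(f"output after 30 samples: {run(setpoint=3.0):.2f}")
```

Because the input grid is coarse, the output settles close to, but not exactly on, the setpoint. A production controller would optimize continuous moves for several inputs at once and honor constraints on inputs and outputs.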

In practice, EKAE's APC system includes two controllers: one for the milling and cooking section and a second for the stillage-dehydration section. The objectives for the milling/cooking section were to balance load and energy between mills for efficient milling; increase feed to the beer column to increase ethanol production; manage fermentation inventory with the water balance; control liquefaction and slurry solids percentages to reduce disturbances to the fermenters; and control backset percentage to ensure a constant mineral feed to fermentation, which maintains consistent water quality in the fermentation feed. Goals for the stillage-dehydration section, including centrifuges, evaporators and dryers, were to balance load distribution between centrifuges; decrease energy use in ethanol production; control dryer moisture for consistent DDG product quality; reduce average thermal oxidizer (TO) hotbox temperature while keeping it within environmental limits; and stabilize steam pressure within the TO's operating limits.

To accomplish these objectives, Sommer says, liquefaction solids, evaporator solids and dryer moisture were controlled to operator-determined targets using values predicted in real time by virtual online analyzers (VOAs) developed by Pavilion. Likewise, backset flow was manipulated by the water-balance controller, and its trajectories were sent to the slurry/stillage controller. Slurry solids were controlled by manipulating the amounts of corn and water fed to the slurry tank: the operator set a target value for liquefaction solids, and the application calculated the slurry-solids target required to achieve that liquefaction-solids consistency. The application included VOAs for slurry, liquefaction and syrup solids, as well as dryer product moisture, and these analyzers provided real-time calculations to the controller.
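At the bottom of that cascade, meeting a slurry-solids target by adjusting corn and water comes down to a steady-state mass balance. The sketch below solves that balance for the water rate, assuming mass flows in consistent units, a known dry-matter fraction for the ground corn, and an optional solids fraction in the water stream (recycled water such as backset can carry solids). All function names and numbers are hypothetical illustrations, not EKAE's or Pavilion's calculation.

```python
def water_for_target_solids(corn_rate: float, corn_dry_fraction: float,
                            target_solids: float,
                            water_solids: float = 0.0) -> float:
    """Water mass rate that brings the slurry to target_solids.

    Steady-state solids balance (all fractions are mass fractions):
        s * (C + W) = C*d + W*w   =>   W = C * (d - s) / (s - w)
    """
    if not water_solids < target_solids < corn_dry_fraction:
        raise ValueError("need water_solids < target_solids < corn_dry_fraction")
    return (corn_rate * (corn_dry_fraction - target_solids)
            / (target_solids - water_solids))


def slurry_solids(corn_rate: float, corn_dry_fraction: float,
                  water_rate: float, water_solids: float = 0.0) -> float:
    """Resulting slurry solids fraction for given corn and water rates."""
    solids = corn_rate * corn_dry_fraction + water_rate * water_solids
    return solids / (corn_rate + water_rate)


if __name__ == "__main__":
    corn = 1000.0   # hypothetical corn feed, lb/min
    dry = 0.85      # hypothetical dry-matter fraction of the ground corn
    target = 0.32   # hypothetical slurry-solids target (32%)
    for w in (0.0, 0.05):  # fresh water vs. water carrying 5% solids
        water = water_for_target_solids(corn, dry, target, w)
        print(f"water solids {w:.0%}: add {water:.1f} lb/min "
              f"-> slurry solids {slurry_solids(corn, dry, water, w):.3f}")
```

Any solids carried in the water stream raise the water rate needed to hit the same target, which is one reason backset percentage is controlled alongside slurry solids.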

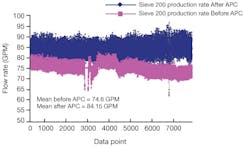

Before APC was installed at EKAE, its beer feed flow rate averaged roughly 511 gpm. After APC was added, beer feed flow rate increased 3.6% to an average of 529.3 gpm. In addition, 190-proof production averaged 91.4 gpm before APC and rose to 104.5 gpm afterward. Most important, the average 200-proof flow to storage increased from 74.6 gpm to 84.2 gpm, which represents a 12.8% increase in ethanol production (Figure 1). Steam consumption, measured in lb per gallon of ethanol, also improved by approximately 9.7%.

Figure 1. East Kansas Agri-Energy (EKAE) used advanced process control (APC) to increase its production of 200-proof ethanol by 12.8% from 74.6 gpm (pink line) to 84.2 gpm (blue line).
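The percentage gains follow directly from the before-and-after averages, and recomputing them is a quick sanity check on any optimization claim. This small sketch uses only the flow figures reported above; the function name is illustrative.

```python
def pct_change(before: float, after: float) -> float:
    """Percentage change from a 'before' average to an 'after' average."""
    return (after - before) / before * 100.0


# Average flows before and after APC, as reported for EKAE (gpm).
readings = {
    "beer feed": (511.0, 529.3),
    "190-proof": (91.4, 104.5),
    "200-proof to storage": (74.6, 84.2),
}

for name, (before, after) in readings.items():
    print(f"{name}: {before} -> {after} gpm ({pct_change(before, after):+.1f}%)")
```

The beer-feed gain comes out at +3.6% and the 190-proof gain at +14.3%; the 200-proof gain computes to about +12.9%, in line with the reported 12.8% given rounding of the averages.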

Besides these quantitative benefits, NMPC also gave EKAE several operational advantages: models don't require major adjustment unless the process is substantially modified; the process stays stable during fluctuating conditions, such as weather changes or upsets in the distillation section; and better control of the fermentation/beerwell inventories makes it easier to match the milling rate to beer feed target rates. This stability increased the plant's ability to push production rates to capacity.

"The APC project allowed us to achieve record production rates by finding optimal process operating conditions. This reduced the amount of energy needed per gallon of ethanol produced," says Sommer. With the APC application, EKAE was able to overcome operational constraints that had limited plant production efficiency.

Reflecting Real-Time Reality

A picture may be worth 1,000 words, but it also can be a useful model that's worth a pile of savings. For instance, Jura Cement's Wildegg plant partnered with ABB Switzerland in 2005 to integrate model-predictive control (MPC) into ABB's Expert Optimizer 5.0 software and extend it to include Mixed Logical Dynamic (MLD) systems. ABB says combining MLD modeling with MPC lets the system make short-term predictions about how the process will evolve. Jura uses ABB's System 800xA as the control system for its main plant, and it also integrates the Siemens S7 Cemat library into 800xA by accessing data via Siemens-based OPC servers and an ABB Cemat Connect library.

In Jura's cement mills, MPC and MLD evaluate the merits of different control decisions and then select and implement the best ones for the future. Also, because the MLD mill model is built in Expert Optimizer's graphical model-building toolkit, it gives veteran operators a clear representation of the real system's relationships with its components. After the first mill operated successfully with Expert Optimizer 5.0's MPC and MLD control, Jura decided in May 2008 to extend the software to its other mills.
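MLD models describe processes in which continuous dynamics interact with on/off logic, which lets an MPC weigh discrete decisions alongside continuous ones. Production tools typically solve such problems with mixed-integer optimization; the sketch below simply enumerates every combination over a three-step horizon to show the idea. The mill model, feed rates, operating modes and cost weights are all hypothetical and are not Jura's or Expert Optimizer's.

```python
import itertools

# Hypothetical mill model (NOT Jura's): the fill level rises with feed and
# falls with output, and output depends on a binary grinding mode.
OUTPUT = {0: 4.0, 1: 7.0}   # material ground per sample in low (0) / high (1) mode
ENERGY = {0: 1.0, 1: 2.5}   # relative energy cost of each mode
FEEDS = (4.0, 5.5, 7.0)     # allowed feed amounts per sample
HORIZON = 3                 # prediction horizon, in samples
LEVEL_TARGET = 50.0         # desired fill level, arbitrary units
THROUGHPUT_REWARD = 0.5     # weight rewarding higher feed


def step(level: float, feed: float, mode: int) -> float:
    """One-sample level update for the assumed mill model."""
    return level + feed - OUTPUT[mode]


def plan(level: float) -> tuple[float, int]:
    """Score every (feed, mode) sequence over the horizon and return the
    first move of the cheapest one (receding-horizon control)."""
    moves = list(itertools.product(FEEDS, (0, 1)))
    best_cost, best_first = float("inf"), moves[0]
    for seq in itertools.product(moves, repeat=HORIZON):
        lvl, cost = level, 0.0
        for feed, mode in seq:
            lvl = step(lvl, feed, mode)
            cost += ((lvl - LEVEL_TARGET) ** 2 + ENERGY[mode]
                     - THROUGHPUT_REWARD * feed)
        if cost < best_cost:
            best_cost, best_first = cost, seq[0]
    return best_first


if __name__ == "__main__":
    level = 40.0
    for k in range(6):
        feed, mode = plan(level)   # re-plan every sample
        level = step(level, feed, mode)
        print(f"k={k}: feed={feed}, mode={mode}, level={level:.1f}")
```

Enumeration grows as (number of moves) raised to the horizon length, which is why real MLD-based controllers hand the problem to a mixed-integer solver instead.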

Figure 2. Mechanics, shift supervisors and managers at National Grid get visual KPIs from their electric and gas facilities on Transpara displays fed by other software data sources.

Reaching Up to the Enterprise

Once optimization and MPC tools begin to achieve efficiencies and their users get familiar with them, those users seem almost involuntarily to seek out other applications to improve, including ones higher up the management and enterprise ladder.

To extract inaccessible DCS data needed to fix its $250,000 phase separator and keep a production unit from wearing out pumps too fast, Arkema Chemicals in Calvert City, Ky., deployed OSIsoft's PI System software about six years ago, achieving a one-time savings of $1.8 million and annual savings of $590,000. The plant makes Forane refrigerants for automotive air conditioners, other blended refrigerants and Kynar polymers.

Dwight Stoffel, Arkema's principal plant electrical instrumentation engineer, says PI took three days to install, and it provides a common point of view, necessary decision points and easy access to the plant's disparate information sources. As a result, staffers in every department use several PI client tools, including ProcessBook for graphical visualization, trending and analysis, and DataLink for Excel-based reporting. "Personnel in maintenance, logistics, engineering, the laboratory, accounting, process technology and operations have access to PI information, and they all use it to do their jobs better and optimize processes," says Stoffel.
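Reports built from historian data usually summarize irregularly spaced samples, because historians such as PI store a new value only when it changes meaningfully (exception and compression filtering). A plain arithmetic mean then over-weights bursts of closely spaced samples, so report averages are normally time-weighted. The function below is a generic, hypothetical sketch of a time-weighted average that assumes linear interpolation between samples; it does not use or imitate PI's or DataLink's own APIs.

```python
from datetime import datetime, timedelta


def time_weighted_average(samples: list[tuple[datetime, float]]) -> float:
    """Time-weighted average of time-sorted (timestamp, value) samples.

    Assumes the value varies linearly between samples (trapezoidal rule).
    """
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    span = (samples[-1][0] - samples[0][0]).total_seconds()
    if span <= 0:
        raise ValueError("samples must span a positive time interval")
    area = 0.0
    for (t0, v0), (t1, v1) in zip(samples, samples[1:]):
        area += 0.5 * (v0 + v1) * (t1 - t0).total_seconds()
    return area / span


if __name__ == "__main__":
    start = datetime(2024, 1, 1, 8, 0)
    # Hypothetical pump-discharge pressure readings, irregularly spaced.
    readings = [
        (start, 10.0),
        (start + timedelta(minutes=10), 10.0),
        (start + timedelta(minutes=60), 40.0),
    ]
    simple = sum(v for _, v in readings) / len(readings)
    print(f"arithmetic mean: {simple:.1f}")                          # 20.0
    print(f"time-weighted:   {time_weighted_average(readings):.1f}")  # 22.5
```

The two closely spaced readings at 10.0 cover only the first 10 minutes, so the time-weighted result gives more weight to the long 50-minute ramp toward 40.0.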

Modeling to Simulation to Training

Because plant dynamics such as feedstock levels and downstream flows are so complex to coordinate, the real beauty of an MPC system is that it takes information from an application's other models and then makes changes at exactly the right time to increase that application's throughput.

For example, John Ragone, plant process optimization manager at National Grid, says the utility tries to give its technicians and managers actionable information in role-based formats, so they can make more efficient and timely decisions. National Grid has 6,650 megawatts of generating capacity, about 4.4 million electricity customers in New England and New York State, and more than 3.4 million gas customers in the U.S.

"The two pillars of our information architecture are OSI PI and SmartSignal, and we use them for performance monitoring, predictive maintenance monitoring, DCS historian and analysis, e-notification, control room unit dispatch, energy trader analysis, fuel management and cellular access to real-time data," says Ragone. This means mechanics, performance engineers, shift supervisors and traders receive key performance indicators (KPIs) pre-assigned to their specific jobs, drawn from the original data sources and delivered to cellphones, BlackBerry devices and other handhelds by Transpara Corp.'s business intelligence (BI) visualization software.
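Role-based delivery amounts to computing KPIs once from source data and then filtering them by job. The sketch below illustrates that pattern with one widely used power-plant KPI, net heat rate: fuel energy consumed per kilowatt-hour of net generation, in Btu/kWh, where lower is better. The role-to-KPI table, function names and plant figures are hypothetical and do not represent National Grid's or Transpara's configuration.

```python
BTU_PER_KWH = 3412.14  # heat equivalent of one kilowatt-hour

# Hypothetical role-to-KPI assignments, for illustration only.
ROLE_KPIS = {
    "performance engineer": ["heat_rate_btu_per_kwh", "thermal_efficiency"],
    "shift supervisor": ["net_mw", "heat_rate_btu_per_kwh"],
    "trader": ["net_mw"],
}


def heat_rate(fuel_btu_per_h: float, net_kw: float) -> float:
    """Net heat rate: (Btu/h of fuel) / (kW net) = Btu/kWh; lower is better."""
    if net_kw <= 0:
        raise ValueError("net output must be positive")
    return fuel_btu_per_h / net_kw


def kpis_for(role: str, fuel_btu_per_h: float, net_kw: float) -> dict[str, float]:
    """Compute every KPI, then return only those pre-assigned to the role."""
    hr = heat_rate(fuel_btu_per_h, net_kw)
    all_kpis = {
        "heat_rate_btu_per_kwh": hr,
        "thermal_efficiency": BTU_PER_KWH / hr,
        "net_mw": net_kw / 1000.0,
    }
    return {name: all_kpis[name] for name in ROLE_KPIS[role]}


if __name__ == "__main__":
    # Hypothetical unit: 3.5 billion Btu/h of fuel, 350 MW net.
    for role in ROLE_KPIS:
        print(role, kpis_for(role, 3.5e9, 350_000.0))
```

For the hypothetical unit, the heat rate is 10,000 Btu/kWh, about 34% thermal efficiency; each role sees only its own slice of those numbers.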

So far, Ragone adds, National Grid has saved more than $11 million, thanks in part to its role-based data display tools. Most of those savings came from heat-rate improvements, ISO penalty disputes and testing, DCS historical database improvements, and identifying critical points on a fluid drive.

So, can I lead next time?

Jim Montague is Control's executive editor.

How to Optimize Your Application

Maina Macharia, Pavilion Technologies' project engineering manager, says there are several basic steps in undertaking most process control optimization projects.
